Floating Points Live at HERE at Outernet

As Technical Lead for Dimension LIVE, Oliver developed a bespoke media server system integrating TouchDesigner, Unreal Engine, and GrandMA for real-time control of both physical and virtual DMX lighting fixtures. In collaboration with Hamill Industries and artist Akiko Nakayama, he helped deliver the first-ever live show to feature real-time virtual lighting and stage extension on the venue’s LED screen, seamlessly blending live performance with immersive virtual elements.

Tech: TouchDesigner, TouchEngine, Unreal Engine, GrandMA

Credits

- Artist: Floating Points

- Visuals: Akiko Nakayama | Hamill Industries

- Lighting and Stage Design: Ed Warren | Will Potts (Lighting Stage Design)

- Photographer: Genevieve Reeves | Seb Gardner

- Promoter: Eat Your Own Ears

- LIVE VP Integration: Dimension LIVE

- Technical Lead: Oliver Ellmers

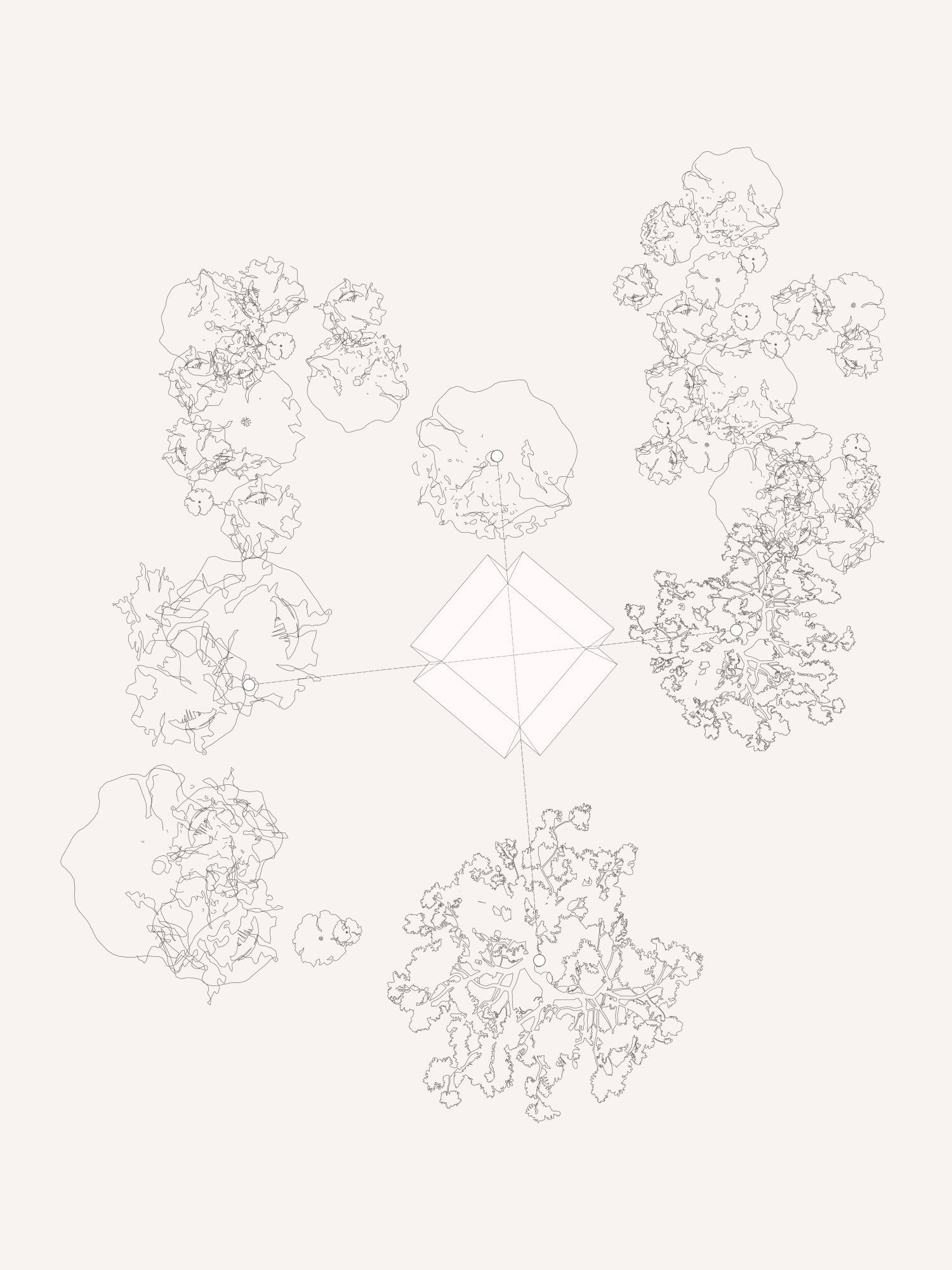

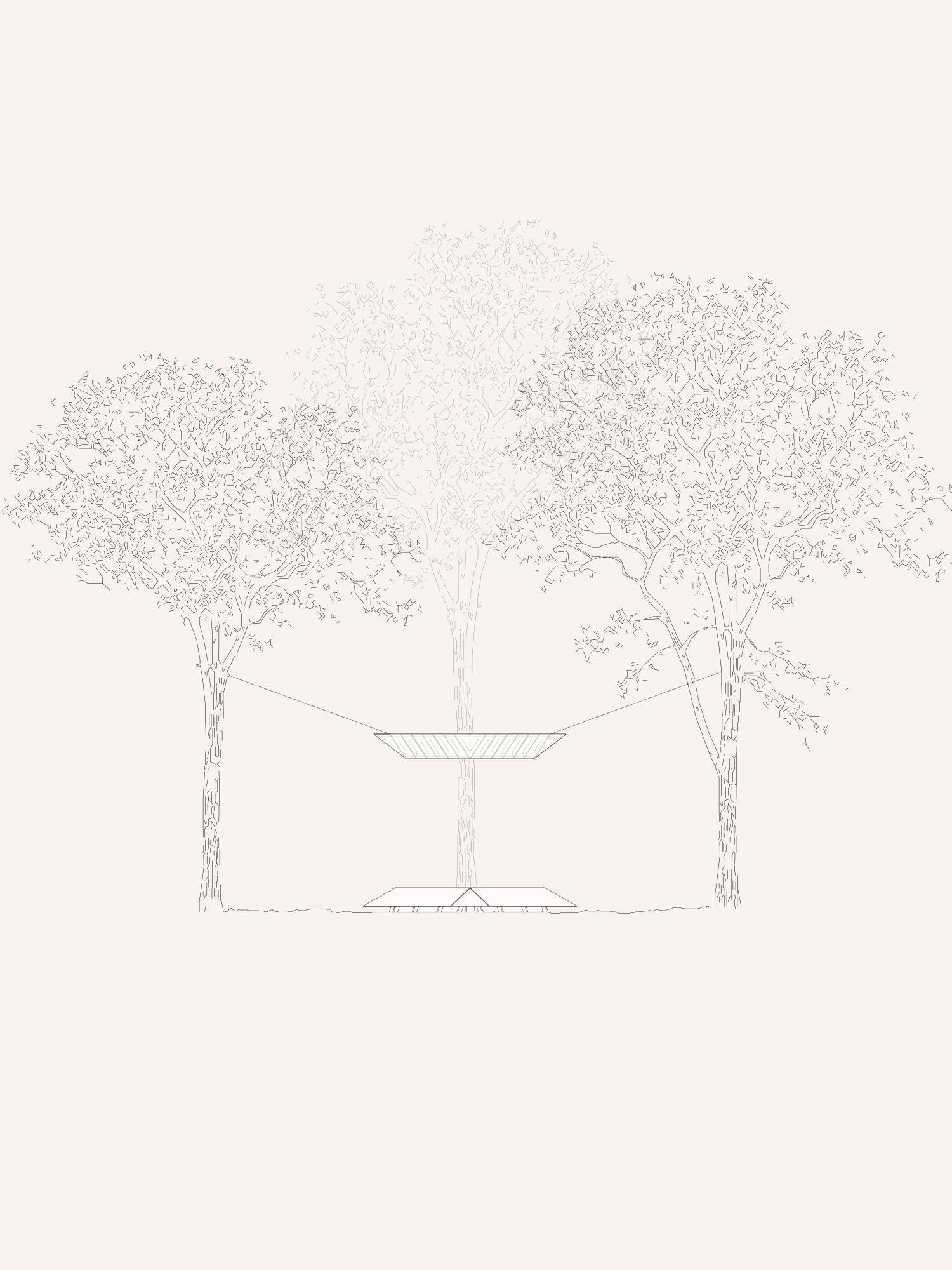

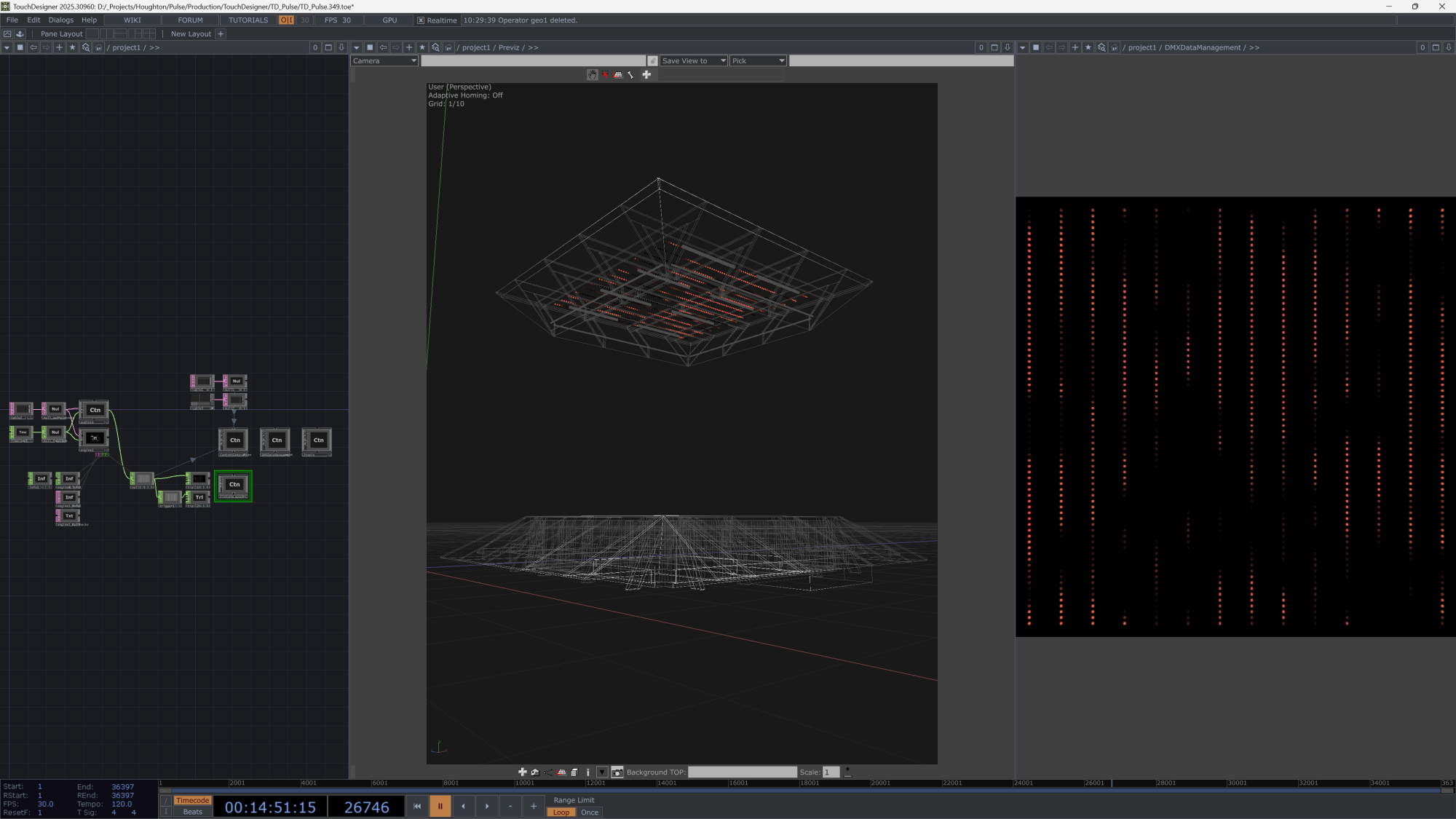

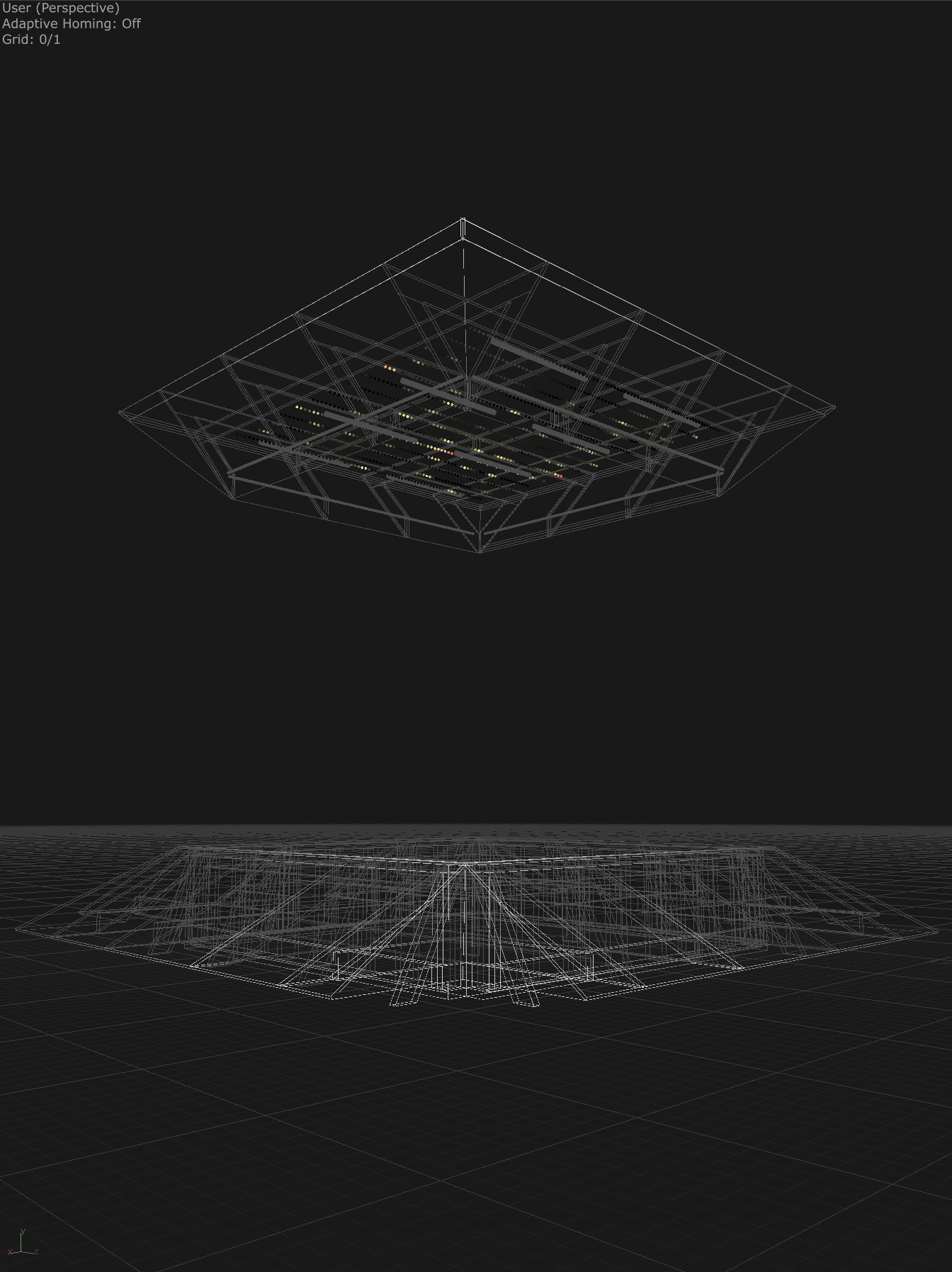

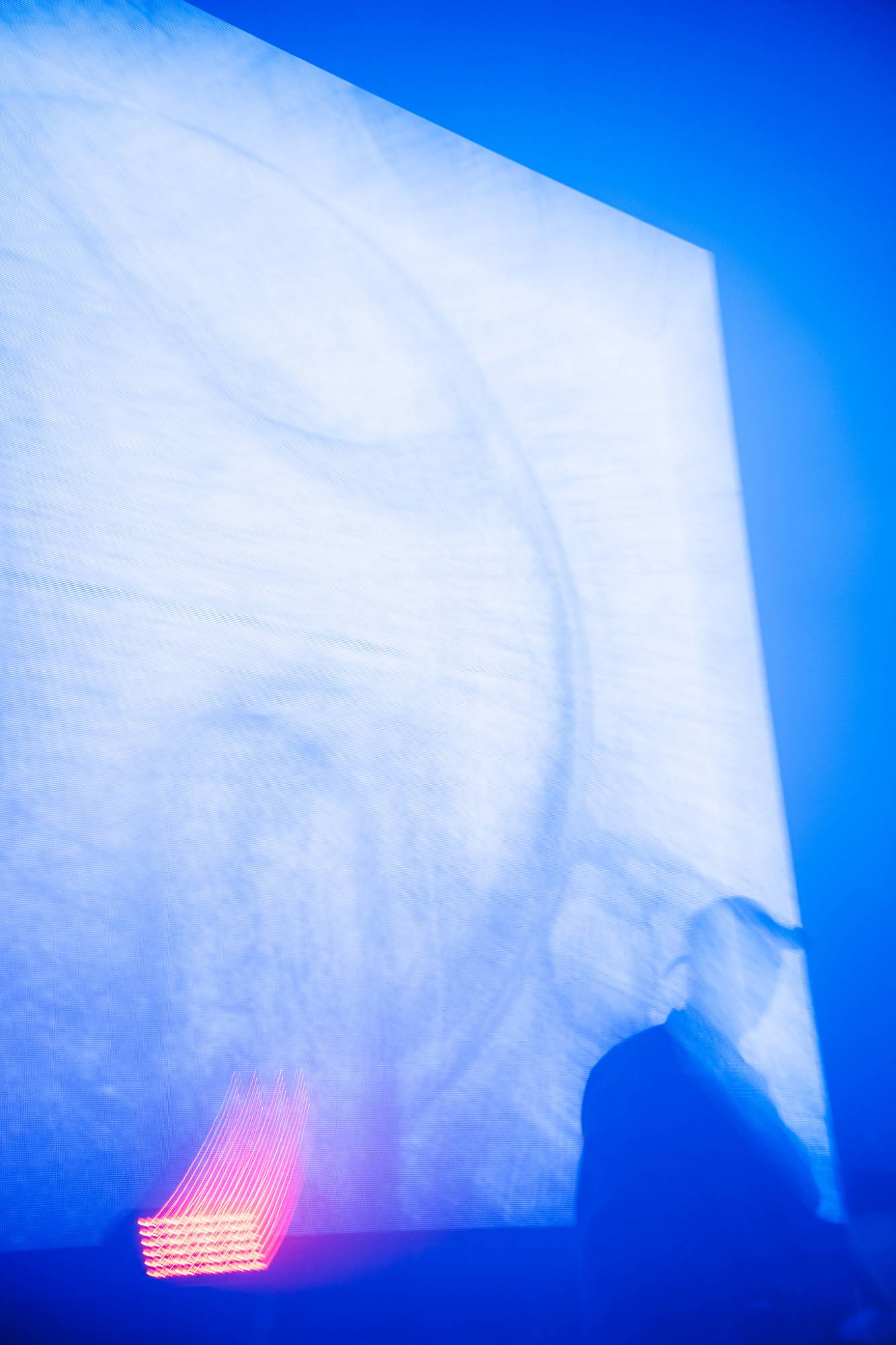

Pulse

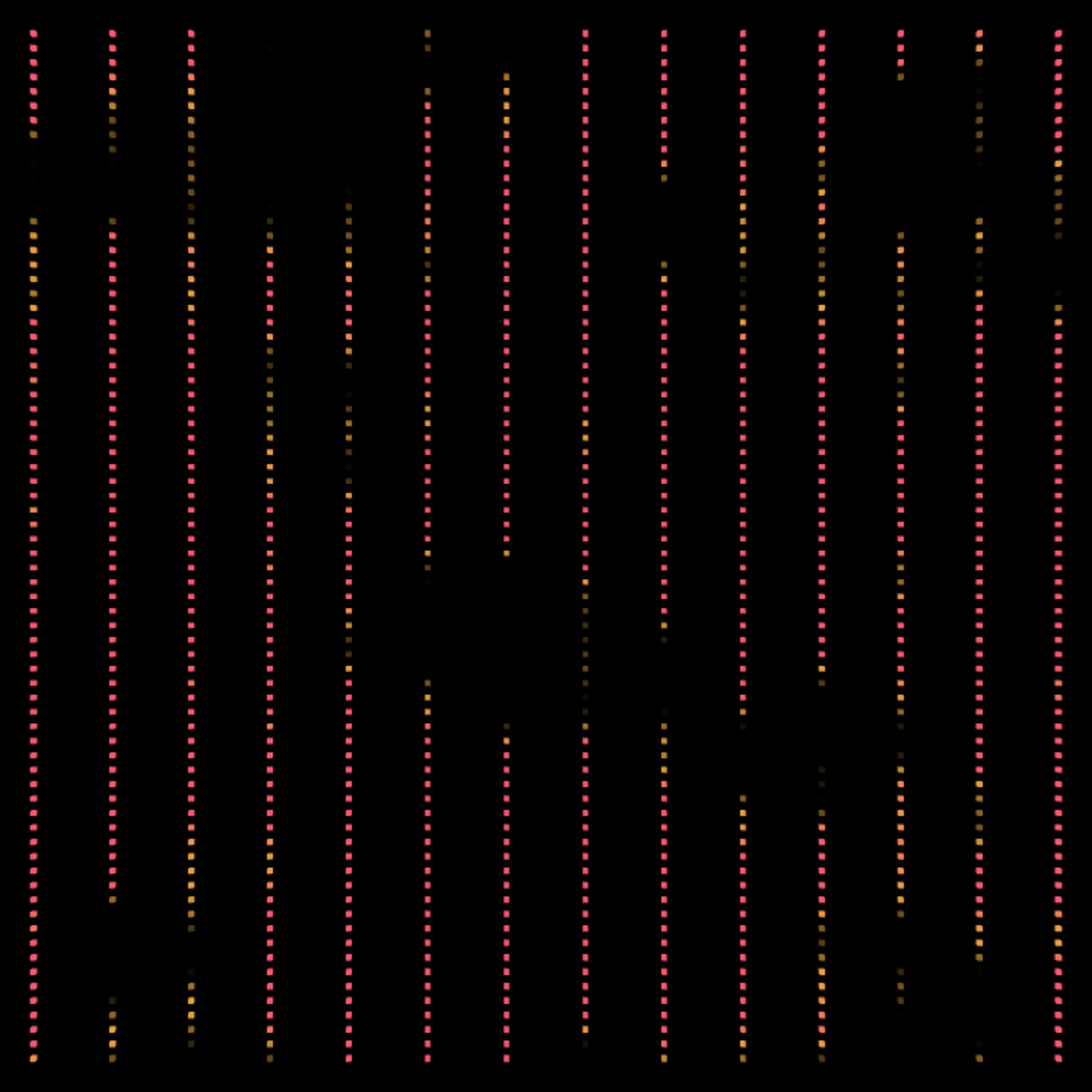

Pulse is an immersive audiovisual installation by EBBA for Houghton Festival 2025, translating the subtle resonance of trees into a living composition of light, sound, and vibration. Working in collaboration with Sound Designer Kevin Pollard and Lead Designer Benni Allan, Oliver Ellmers developed a real-time TouchDesigner control system that unified spatial audio, lighting, and haptic feedback, creating a dynamic and responsive environment within the forest canopy.

Pulse is a new conceptual installation developed by EBBA director Benni Allan for Houghton Festival 2025, exploring the unseen resonance of the forest through light, sound, and vibration. Drawing on environmental sensors embedded within the woodland, the installation captures subtle signals from the surrounding trees and transforms them into a dynamic composition that evolves in real time. The result is a meditative, site-specific experience that bridges human perception and the living environment.

Visitors enter a responsive space where light pulses ripple through the canopy and a multi-channel soundscape moves fluidly around them. Designed to react to environmental data and spatialised sound, the installation reveals the forest’s rhythm through the interplay of light, audio, and physical vibration.

As Lighting Designer and Technical Systems Engineer, Oliver Ellmers was responsible for the real-time integration of the visual and sonic components. Using TouchDesigner, he created a custom interactive control system that ingested 3D spatialised audio composed by Kevin Pollard and translated it into procedural lighting patterns mapped across the suspended LED canopy. The system analysed the live audio streams feeding the L-Acoustics spatial speaker array, driving both the lighting and the haptic transducers embedded within the structure to create an enveloping sensory field.
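The write-up does not describe the analysis itself, so the following is only a minimal sketch of one common way an audio level can drive both a light and a haptic output: a block RMS measurement smoothed by an attack/release envelope follower. The coefficients, the `haptic_gain` value and all function names are hypothetical and not taken from the Pulse system.

```python
import math

def block_rms(samples):
    """Root-mean-square level of one block of samples in [-1, 1]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

class EnvelopeFollower:
    """One-pole envelope follower with separate attack and release smoothing."""

    def __init__(self, attack=0.5, release=0.1):
        # Coefficients in (0, 1]: higher values track the input faster.
        self.attack = attack
        self.release = release
        self.level = 0.0

    def process(self, value):
        # Rise with the attack coefficient, fall with the (slower) release one.
        coeff = self.attack if value > self.level else self.release
        self.level += coeff * (value - self.level)
        return self.level

def drive_outputs(level, haptic_gain=0.6):
    """Map an envelope level to an 8-bit DMX intensity and a 0..1 haptic level."""
    clamped = min(max(level, 0.0), 1.0)
    dmx_intensity = round(clamped * 255)
    haptic = clamped * haptic_gain   # hypothetical scaling for the transducer amp
    return dmx_intensity, haptic

follower = EnvelopeFollower()
loud = [0.8, -0.8] * 64      # a steady, fairly loud block
silent = [0.0] * 128         # silence: the envelope decays at the release rate
levels = [drive_outputs(follower.process(block_rms(b)))[0] for b in (loud, loud, silent)]
```

With these coefficients `levels` comes out as `[102, 153, 138]`: a fast rise while the sound is present and a slower decay afterwards, which tends to read as a "breathing" light rather than a flicker.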

TouchDesigner functioned as the central show control environment, handling real-time audio analysis and signal mapping, procedural lighting generation and pixel mapping, as well as the sequencing and playback of spatial audio created using the L-Acoustics L-ISA system. The platform also managed synchronised Art-Net control, ensuring that light and vibration moved in harmony with the evolving soundscape to create a unified, immersive experience.
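Art-Net itself is a published protocol, so its framing can be shown concretely. The sketch below builds an ArtDMX packet (the Art-Net message that carries one universe of DMX levels) following the layout in the Art-Net specification; the universe numbers and any destination address you pass are placeholders, and this is an illustration rather than the TouchDesigner network used on site (TouchDesigner provides its own DMX Out CHOP for this).

```python
import socket
import struct

ARTNET_PORT = 6454          # standard Art-Net UDP port
OP_DMX = 0x5000             # ArtDMX opcode (sent little-endian)
PROTOCOL_VERSION = 14       # Art-Net protocol revision (sent big-endian)

def build_artdmx(universe, dmx, sequence=0):
    """Build an ArtDMX packet for a 15-bit port address and up to 512 channels.

    A sequence of 0 tells receivers not to reorder packets.
    """
    if not 0 <= universe < 32768:
        raise ValueError("universe must fit in 15 bits")
    data = bytes(dmx[:512])
    if len(data) % 2:                 # the spec requires an even data length
        data += b"\x00"
    if len(data) < 2:                 # ...of at least two bytes
        data = data.ljust(2, b"\x00")
    header = b"Art-Net\x00"
    header += struct.pack("<H", OP_DMX)
    header += struct.pack(">H", PROTOCOL_VERSION)
    header += struct.pack("BB", sequence & 0xFF, 0)                       # Sequence, Physical
    header += struct.pack("BB", universe & 0xFF, (universe >> 8) & 0x7F)  # SubUni, Net
    header += struct.pack(">H", len(data))                                # Length (big-endian)
    return header + data

def send_artdmx(host, universe, dmx, sequence=0):
    """Send one ArtDMX packet to a node at `host` (a placeholder address)."""
    packet = build_artdmx(universe, dmx, sequence)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, ARTNET_PORT))
```

For example, `build_artdmx(1, [255, 128, 0])` produces the 18-byte header followed by four data bytes, because the odd-length payload is padded to an even length.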

Through this tightly integrated workflow, Pulse became a fully responsive, real-time audiovisual system - an interplay of architecture, sound, and technology. The installation continues as a permanent fixture at Houghton, designed to evolve over time with seasonal and environmental changes, becoming a living monument to the forest’s vitality.

Tech: TouchDesigner, L-Acoustics L-ISA

Credits

- Commissioner: Houghton Festival

- Lead Designer: Benni Allan

- Architects: EBBA

- Sound Design: Kevin Pollard

- Fabricators: Our Department

- Advisory Engineers: Arup Engineers

- Engineers: Public House

- Technical Production: Lighthaus Studio

- Lighting Design | Technical Systems Engineer: Oliver Ellmers

- Fabric Seamstress: Kat Rothery and Katie Dufort

- Carpentry: Abwb.carpentry

DJ BORING LIVE - TOMORROW NEVER COMES TOUR 25

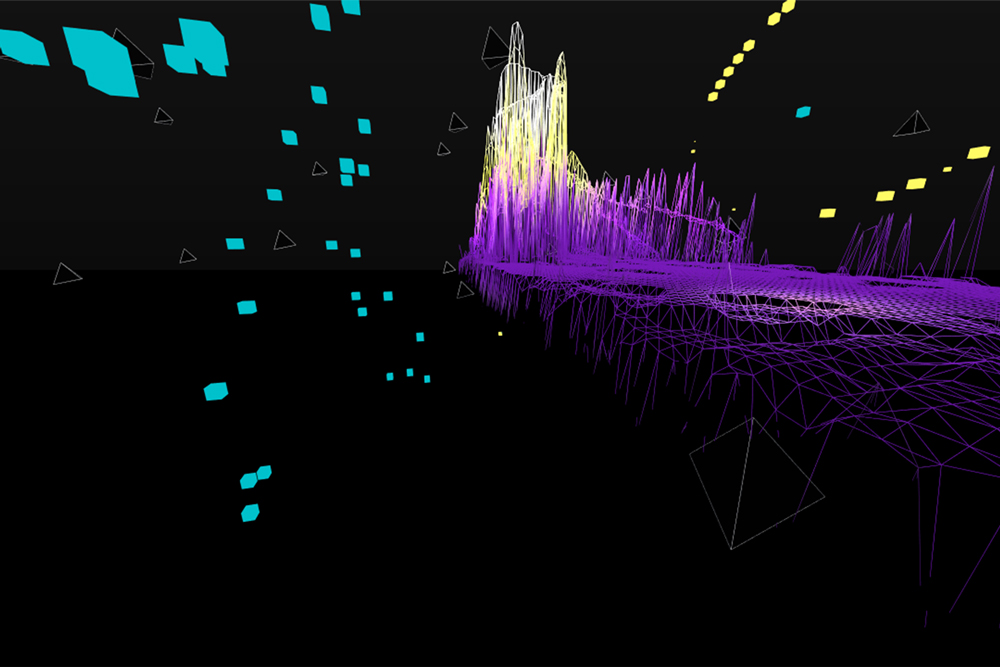

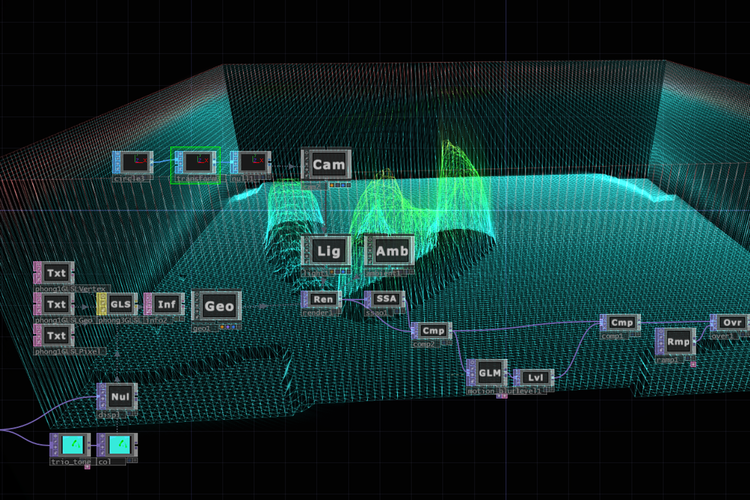

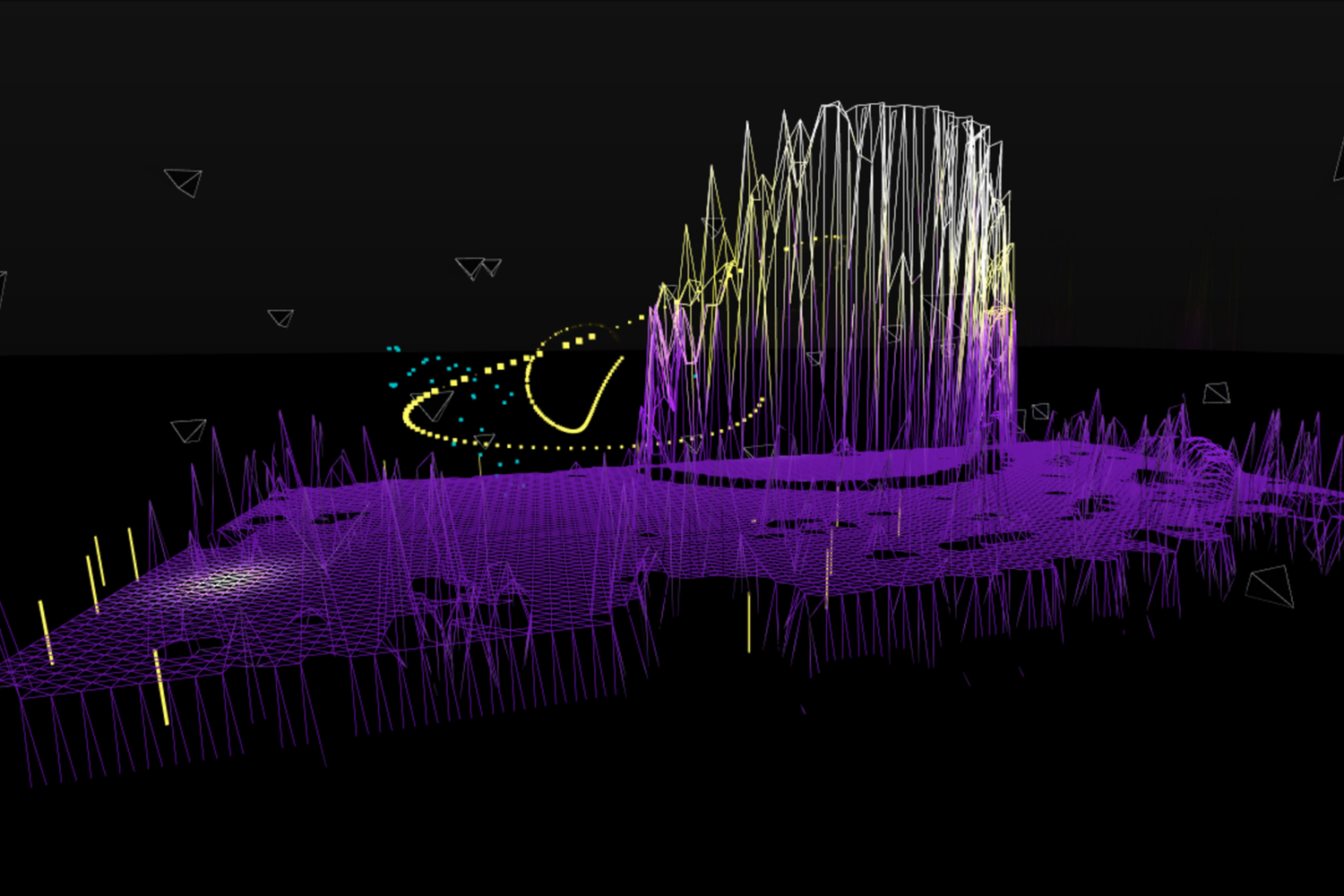

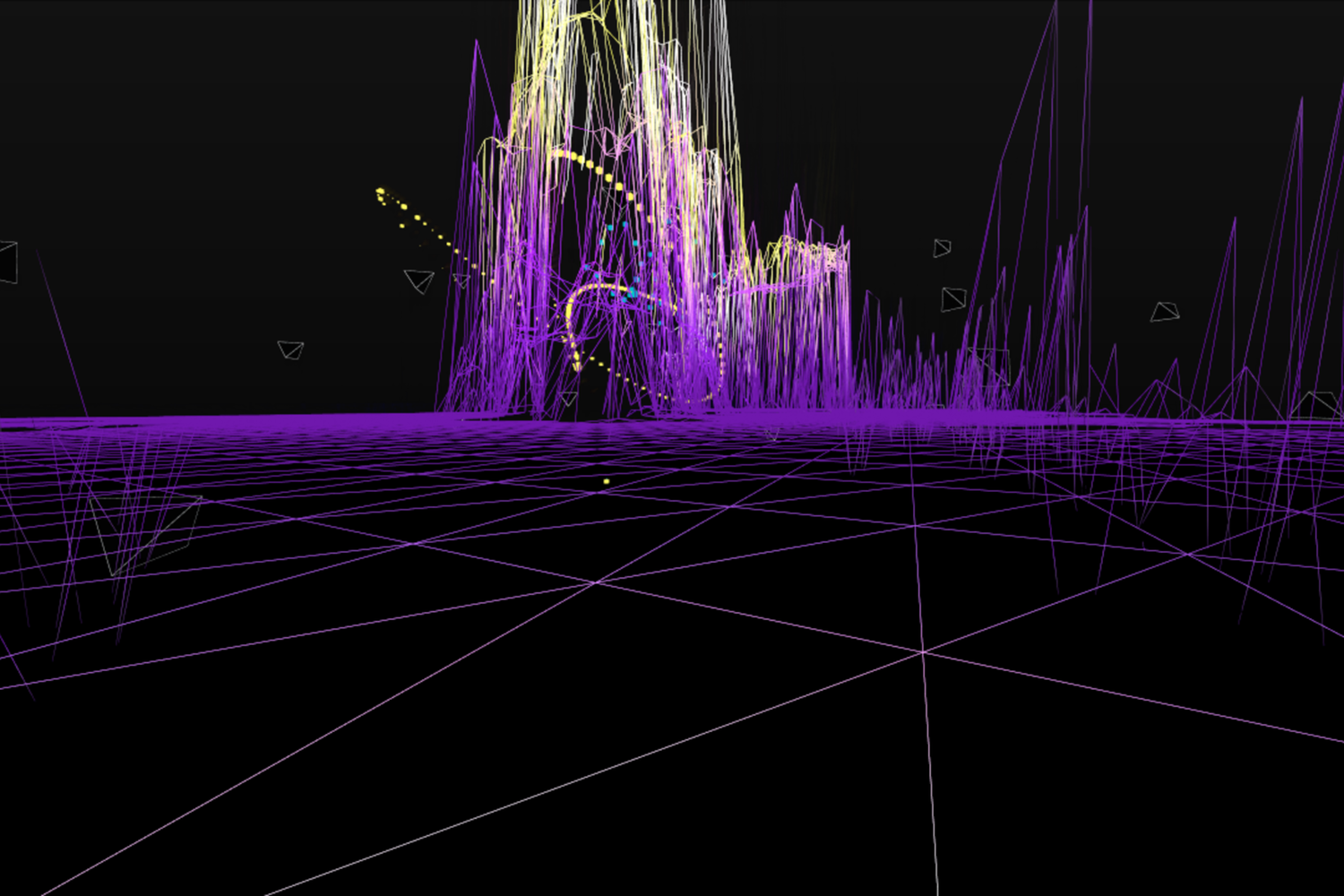

A custom touring audiovisual system designed for DJ BORING’s Tomorrow Never Comes Tour 2025, integrating Ableton Live, TouchDesigner, and Avolites into a single real-time performance environment. Oliver Ellmers led production design, system engineering, and touring operation, developing a tightly synchronised live show that bridges sound, visuals, and lighting.

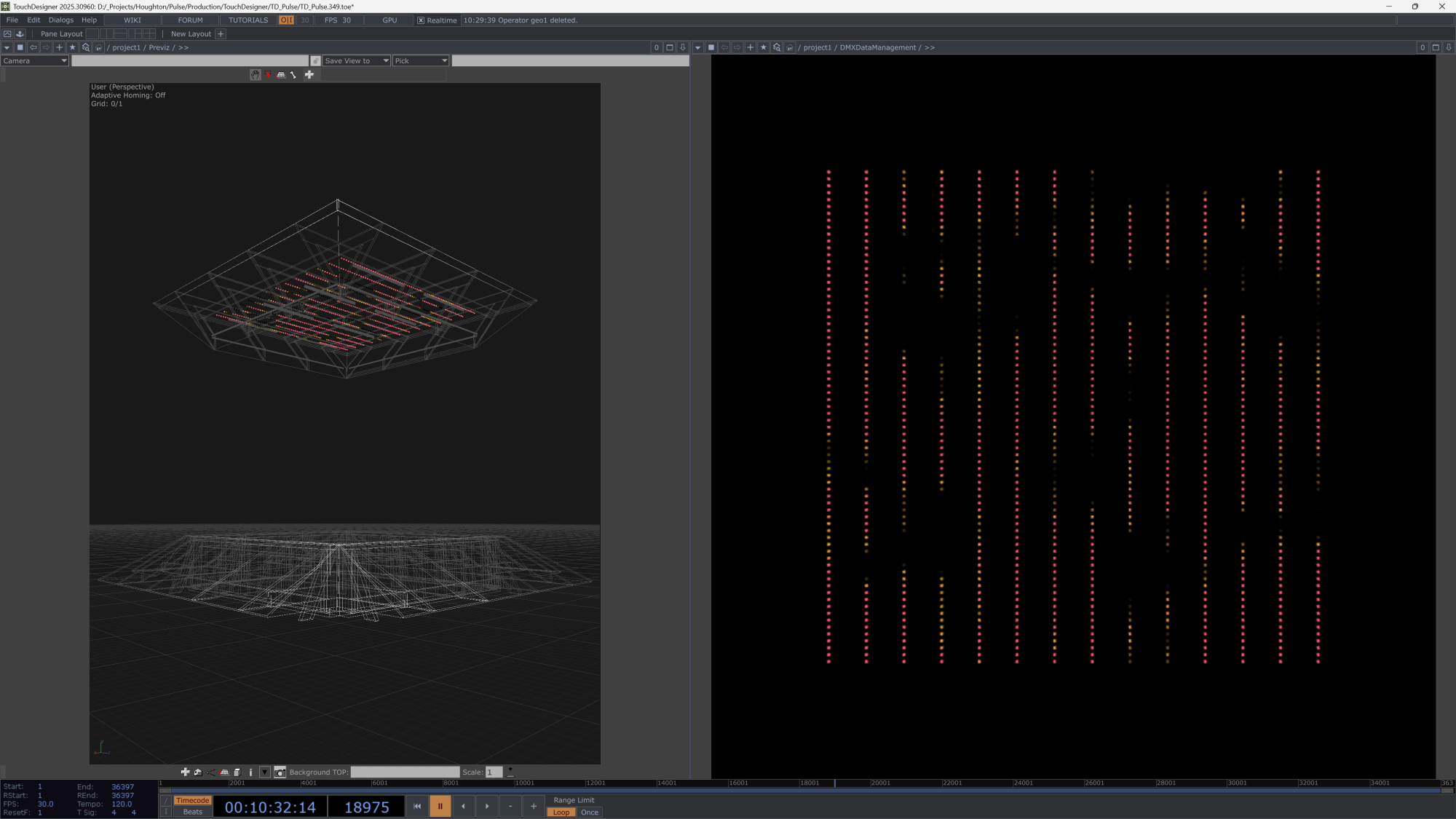

For DJ BORING’s Tomorrow Never Comes Tour 2025, Oliver Ellmers led production design, direction, system engineering, and touring operation, developing a real-time audiovisual framework that connected performance, visuals, and lighting into a unified system.

Working closely with DJ BORING, Oliver designed an integrated live setup linking Ableton Live, TouchDesigner, and Avolites. Real-time MIDI data from DJ BORING’s live Ableton session was streamed directly into TouchDesigner, where a series of GLSL-driven procedural graphics responded dynamically to performance cues, tempo changes, and audio triggers.
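How tempo was extracted is not stated. One common approach, assumed here purely for illustration, is to derive BPM from MIDI Timing Clock messages (status byte `0xF8`), which the MIDI 1.0 specification sends 24 times per quarter note. The function names are hypothetical, and the beat phase is simply the kind of value that could be fed to a GLSL shader uniform.

```python
MIDI_CLOCK = 0xF8           # MIDI Timing Clock status byte
PULSES_PER_QUARTER = 24     # clock resolution defined by the MIDI 1.0 spec

def clock_times(messages):
    """Keep arrival times of clock pulses from (timestamp, status_byte) pairs."""
    return [t for t, status in messages if status == MIDI_CLOCK]

def bpm_from_clock_times(timestamps):
    """Estimate tempo from clock pulse arrival times (seconds).

    Averages the interval across all received pulses to smooth out jitter.
    """
    if len(timestamps) < 2:
        return None
    span = timestamps[-1] - timestamps[0]
    if span <= 0:
        return None
    seconds_per_pulse = span / (len(timestamps) - 1)
    return 60.0 / (seconds_per_pulse * PULSES_PER_QUARTER)

def beat_phase(pulse_count):
    """Position within the current beat, from 0.0 up to (not including) 1.0."""
    return (pulse_count % PULSES_PER_QUARTER) / PULSES_PER_QUARTER
```

At 120 BPM a quarter note lasts 0.5 s, so pulses arrive every 1/48 s; averaging over a whole beat of pulses recovers 120.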

A bespoke communication pipeline was developed between TouchDesigner and the Avolites lighting console, enabling the same live MIDI data that drove the visuals to also control lighting behaviour in real time. Because visuals and lighting shared a single data source, DJ BORING’s live performance, the generative visual content, and the stage lighting stayed locked in sync.
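The key idea here is fan-out: one MIDI stream feeding several consumers, so visuals and lighting never drift apart. A minimal sketch of that idea parses raw three-byte MIDI channel messages and hands each parsed event to every registered sink. The sinks below are hypothetical stand-ins; the actual TouchDesigner-to-Avolites protocol is not specified in the write-up.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MidiEvent:
    kind: str       # "note_on", "note_off" or "cc"
    channel: int    # 1-16
    number: int     # note number or controller number
    value: int      # velocity or controller value, 0-127

def parse_midi(message):
    """Parse a raw 3-byte MIDI channel voice message into a MidiEvent, or None."""
    status, data1, data2 = message
    kind_nibble, channel = status & 0xF0, (status & 0x0F) + 1
    if kind_nibble == 0x90 and data2 > 0:
        kind = "note_on"
    elif kind_nibble == 0x80 or (kind_nibble == 0x90 and data2 == 0):
        kind = "note_off"       # note-on with velocity 0 is treated as note-off
    elif kind_nibble == 0xB0:
        kind = "cc"
    else:
        return None             # other message types are ignored in this sketch
    return MidiEvent(kind, channel, data1, data2)

class Router:
    """Fan one MIDI stream out to several consumers so they stay in step."""

    def __init__(self):
        self.sinks = []

    def register(self, sink):
        self.sinks.append(sink)

    def dispatch(self, message):
        event = parse_midi(message)
        if event is not None:
            for sink in self.sinks:
                sink(event)
        return event

visual_log, lighting_log = [], []
router = Router()
router.register(visual_log.append)     # hypothetical visuals sink
router.register(lighting_log.append)   # hypothetical lighting sink
router.dispatch((0x90, 36, 100))       # note on, channel 1
```

Because both sinks receive the identical event object in the same call, any timing offset between visuals and lighting comes only from the downstream systems, not from the routing.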

The production was designed to be tourable and modular, capable of scaling across different venues and production environments while maintaining consistent creative impact. Collaborating with Lighting Designer Moises Martinez and Re-Production as the production supplier, the team delivered a seamless touring AV show across multiple European venues, culminating in a headline performance at Village Underground, London, in October 2025.

Tech: TouchDesigner, Avolites, Ableton Live

Credits

- Artist: DJ BORING

- Production Design + Direction | Systems Engineer | Graphics Development | Operator: Oliver Ellmers

- Lighting Designer | Operator: Moises Martinez

- Production Supplier: Re-Production

- Photographer: Joshua Hiatt

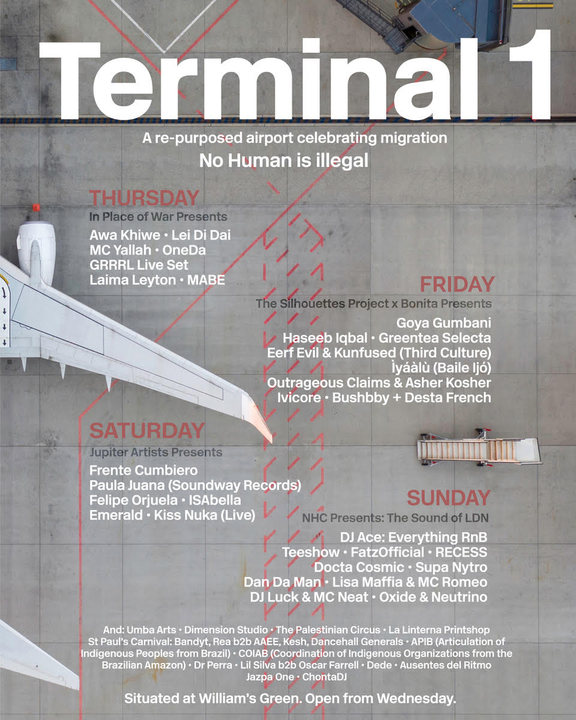

Terminal 1 Air Traffic Control

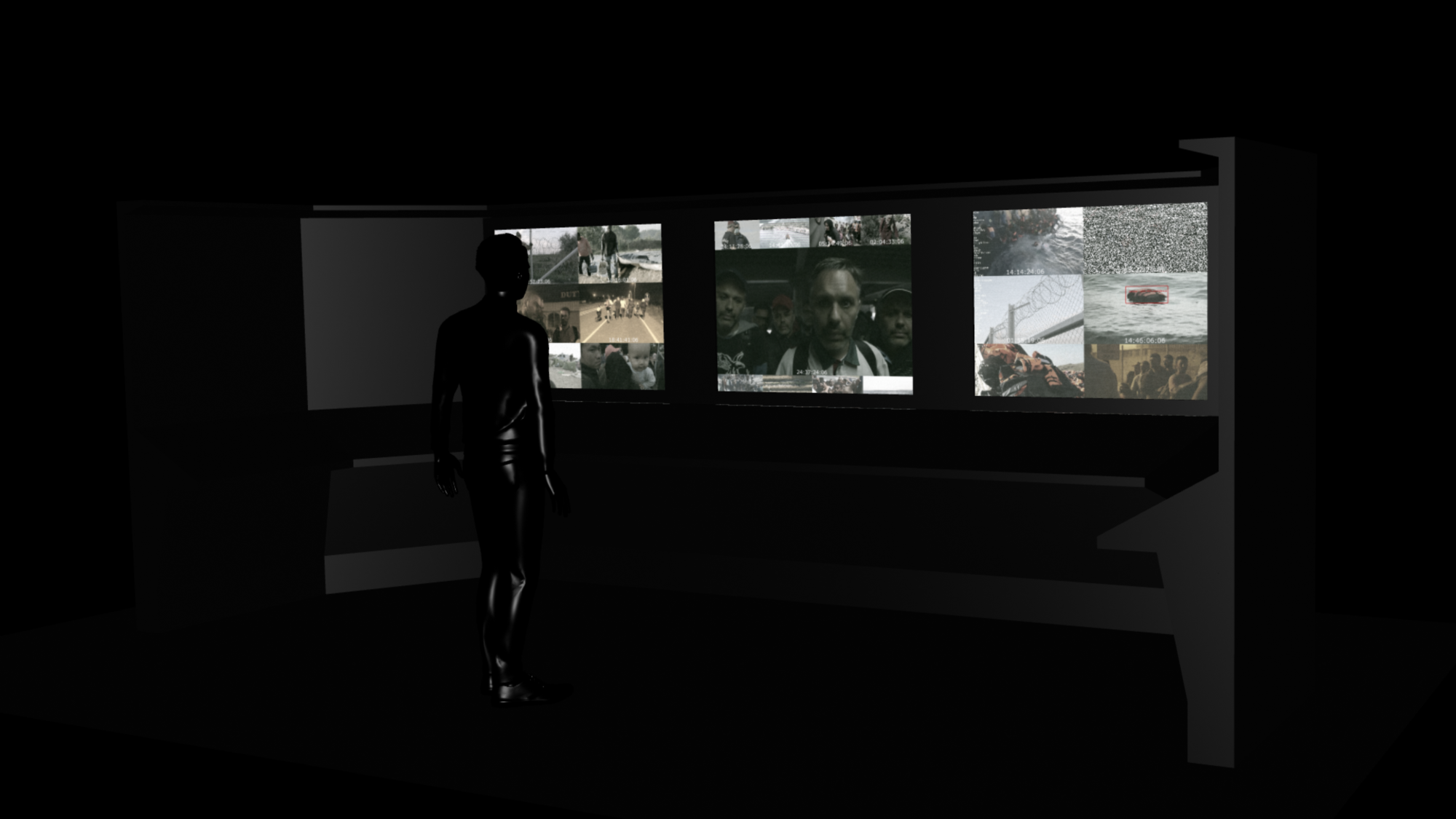

Air Traffic Control is an immersive installation inside Terminal 1 at Glastonbury Festival 2025. A live IP camera, a custom AI face-swap pipeline, and a TouchDesigner control system place each visitor’s face into real footage of migrants in moments of struggle, later revealed on a CCTV wall. The result is a real-time work that exposes the pervasive reach of video surveillance through the lens of deepfake AI.

Air Traffic Control investigates video surveillance, synthetic media, and the politics of migration. Designed and engineered by Oliver Ellmers at Dimension Studio for Terminal 1 at Glastonbury Festival 2025, the work begins by capturing each visitor at a border-control checkpoint. Their face is routed through a custom AI face-swap system, and later - without warning - they encounter a CCTV wall where their likeness appears, seamlessly composited into real scenes of migrants under pressure.

The piece frames identity and complicity within the aesthetics of security footage. By inserting the spectator into documented events, Air Traffic Control demonstrates how frictionless tools (capture, inference, broadcast) can recontextualise people in ways that appear authoritative and “true,” even when entirely manufactured.

Ellmers led the technical development and deployment of the work, including TouchDesigner system design, custom AI integration, pipeline orchestration, media I/O, show control, and on-site implementation.
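Pipeline orchestration in a piece like this largely means decoupling capture from slow inference. The sketch below, a hypothetical illustration rather than the production system, queues captures for a worker thread and assigns each result to a CCTV wall slot round-robin. `face_swap` is a placeholder that performs no AI processing, and the number of wall slots is an assumption.

```python
import queue
import threading

def face_swap(capture_id):
    """Placeholder for the AI inference step; returns a hypothetical clip name."""
    return f"swapped_{capture_id}"

def run_pipeline(capture_ids, wall_slots=4):
    """Process captures in arrival order and assign results to wall slots round-robin."""
    jobs = queue.Queue()
    assignments = {}

    def worker():
        while True:
            item = jobs.get()
            if item is None:            # sentinel: no more captures
                jobs.task_done()
                break
            index, capture_id = item
            slot = index % wall_slots
            assignments[capture_id] = (slot, face_swap(capture_id))
            jobs.task_done()

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    for index, capture_id in enumerate(capture_ids):
        jobs.put((index, capture_id))   # capture side never waits on inference
    jobs.put(None)
    jobs.join()
    thread.join()
    return assignments
```

In a live installation the worker would run continuously rather than stopping at a sentinel; the queue is what lets the checkpoint camera keep capturing while earlier faces are still being processed.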

Tech: TouchDesigner, Deepfake AI

Credits

- Technical Lead | System & Integration Engineer: Oliver Ellmers

- Executive Producer: Mark Bustard

- Studio: Dimension Studio

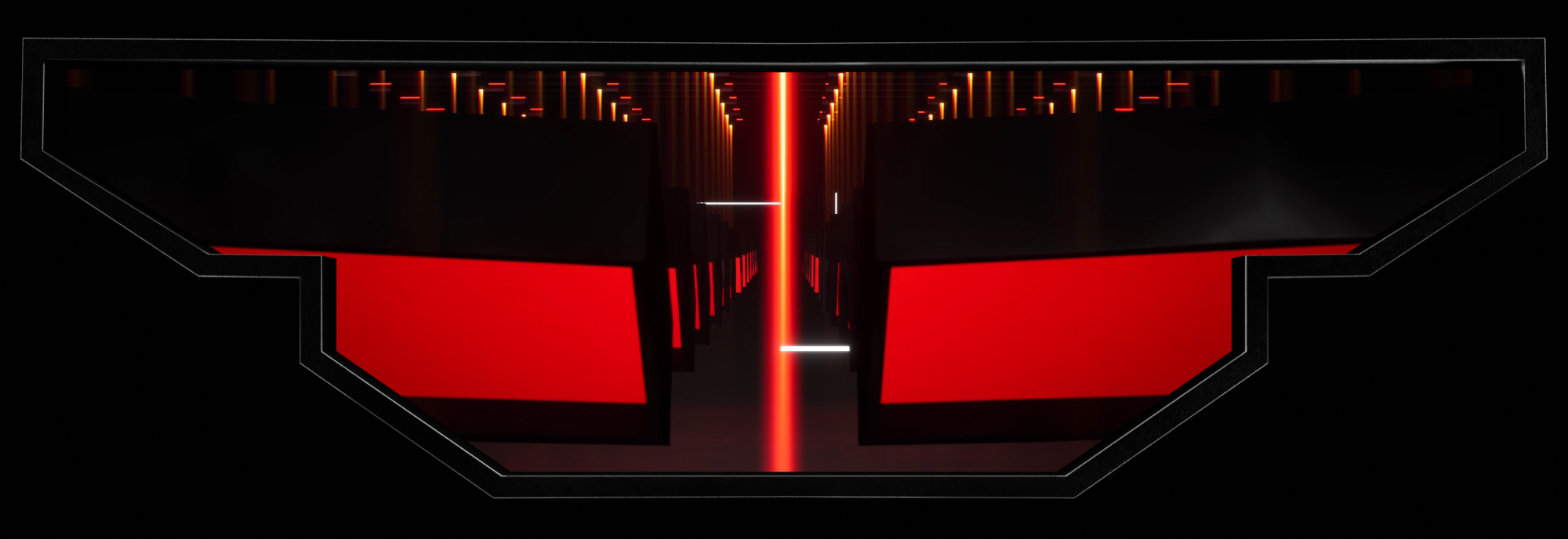

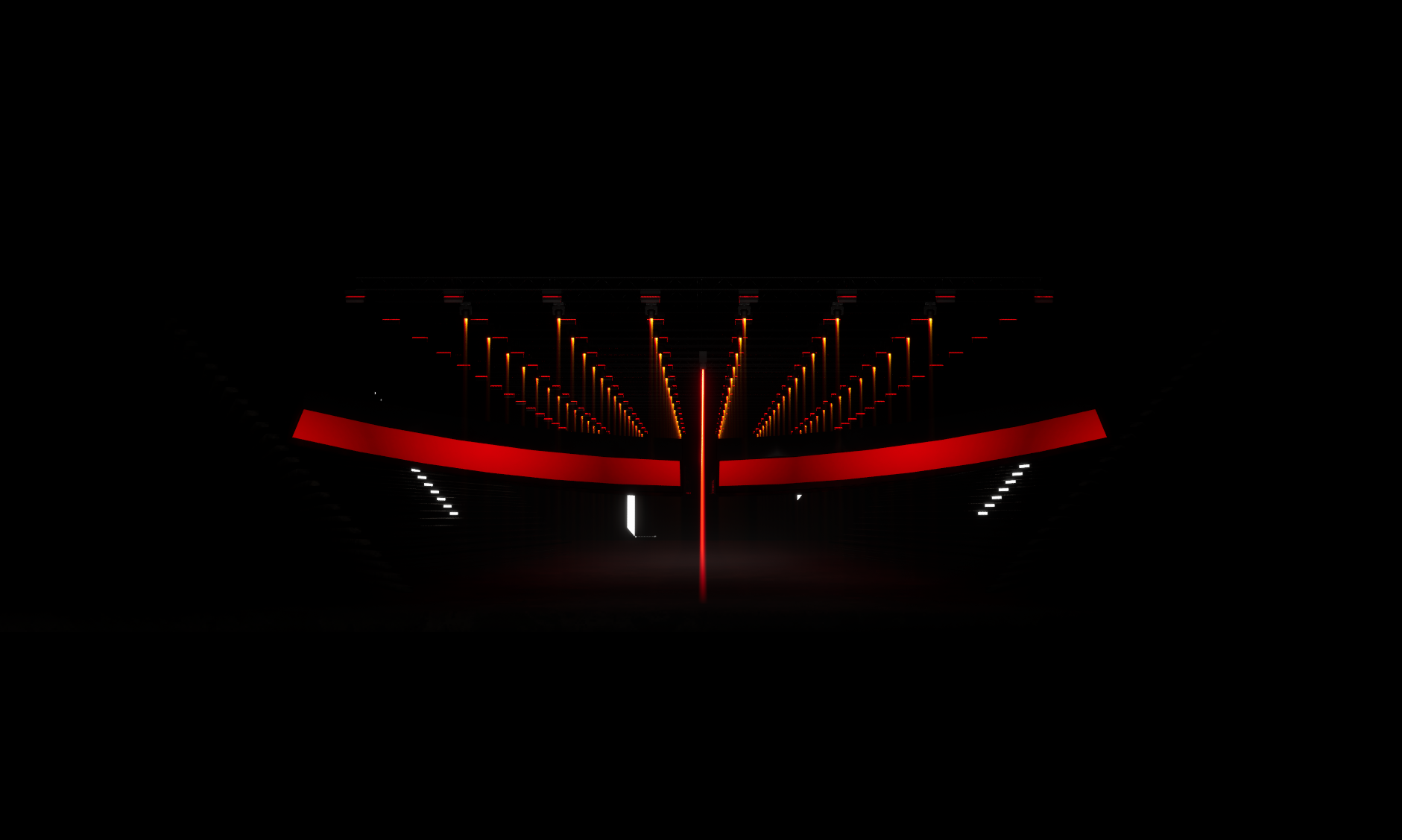

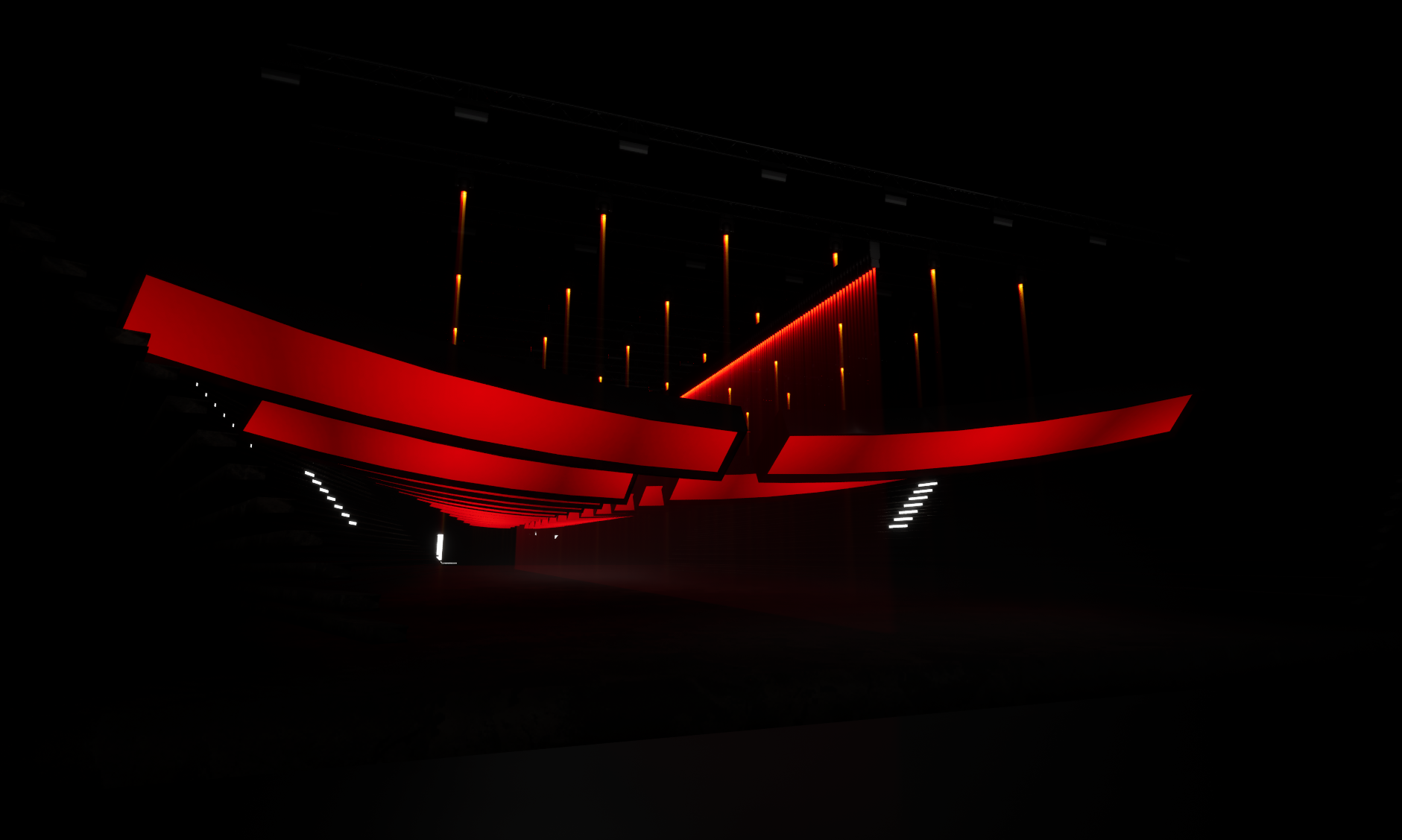

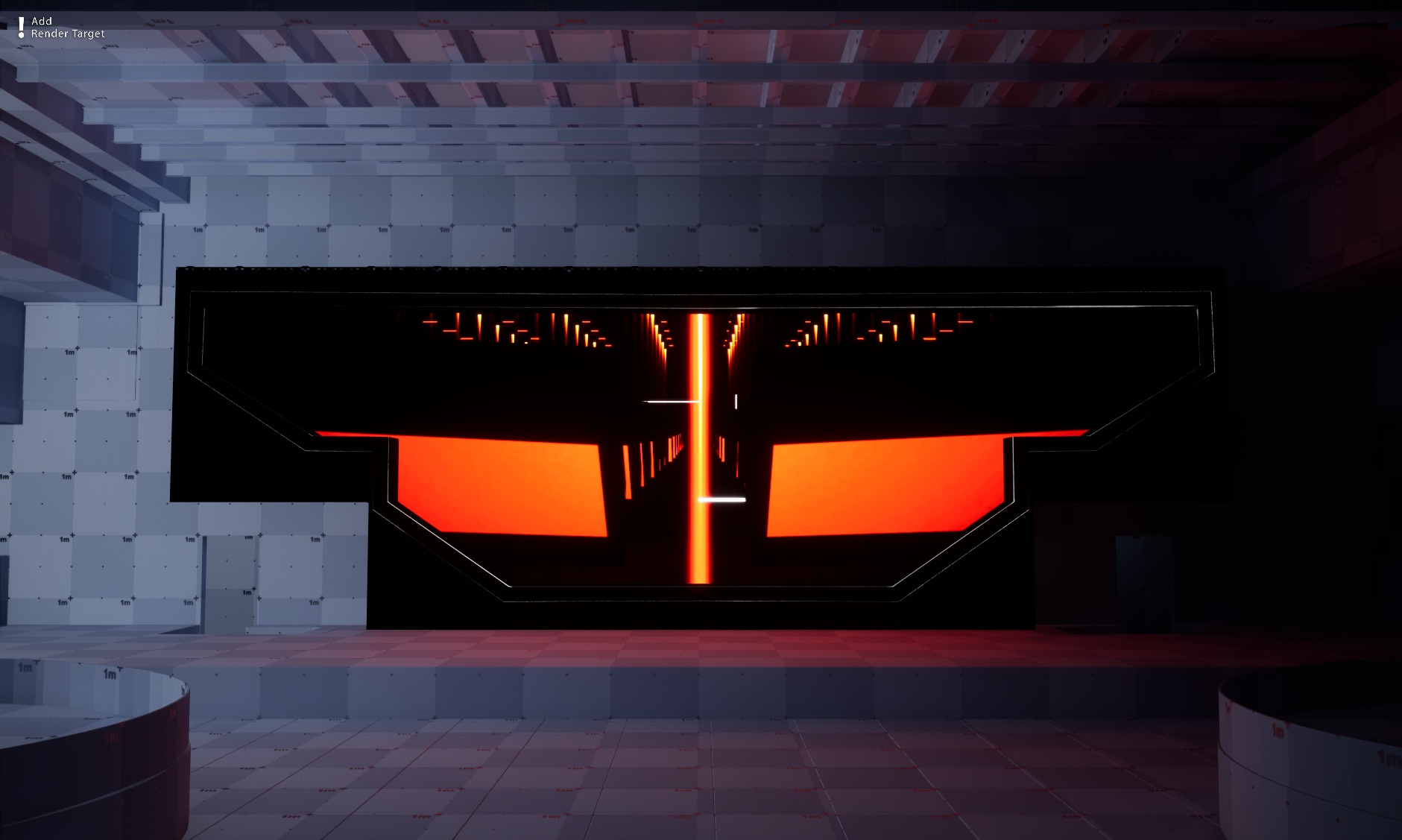

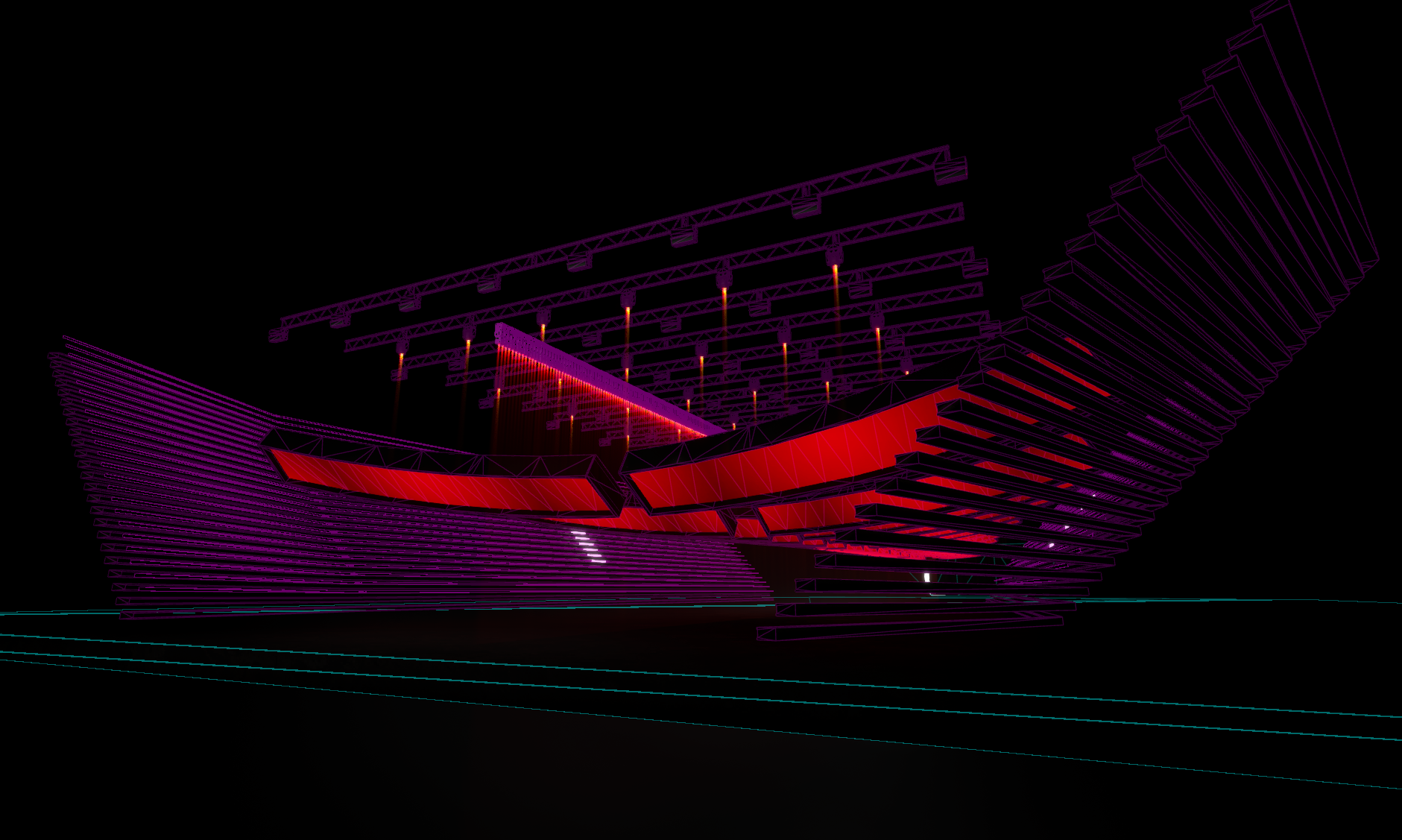

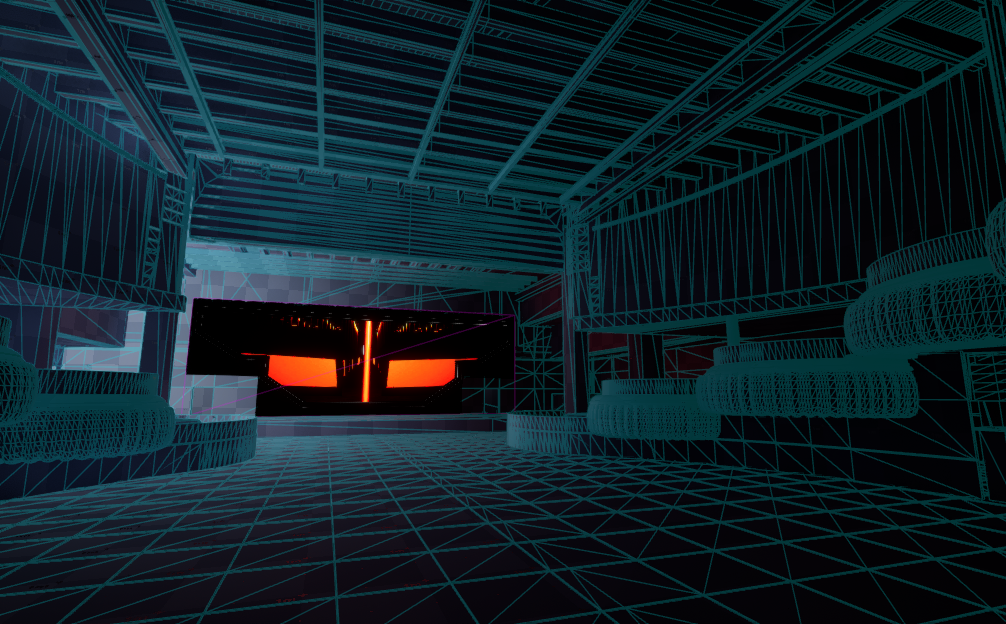

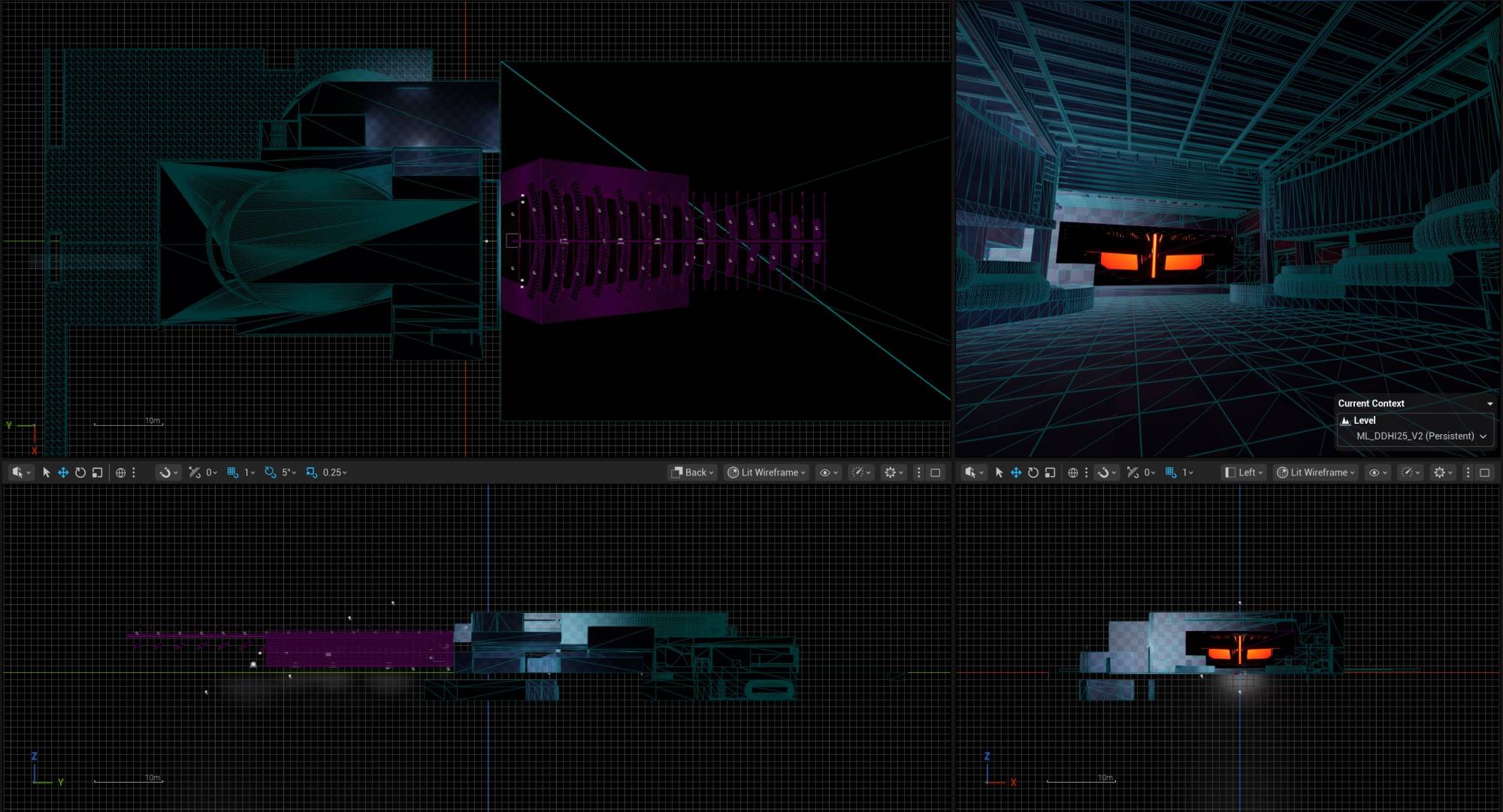

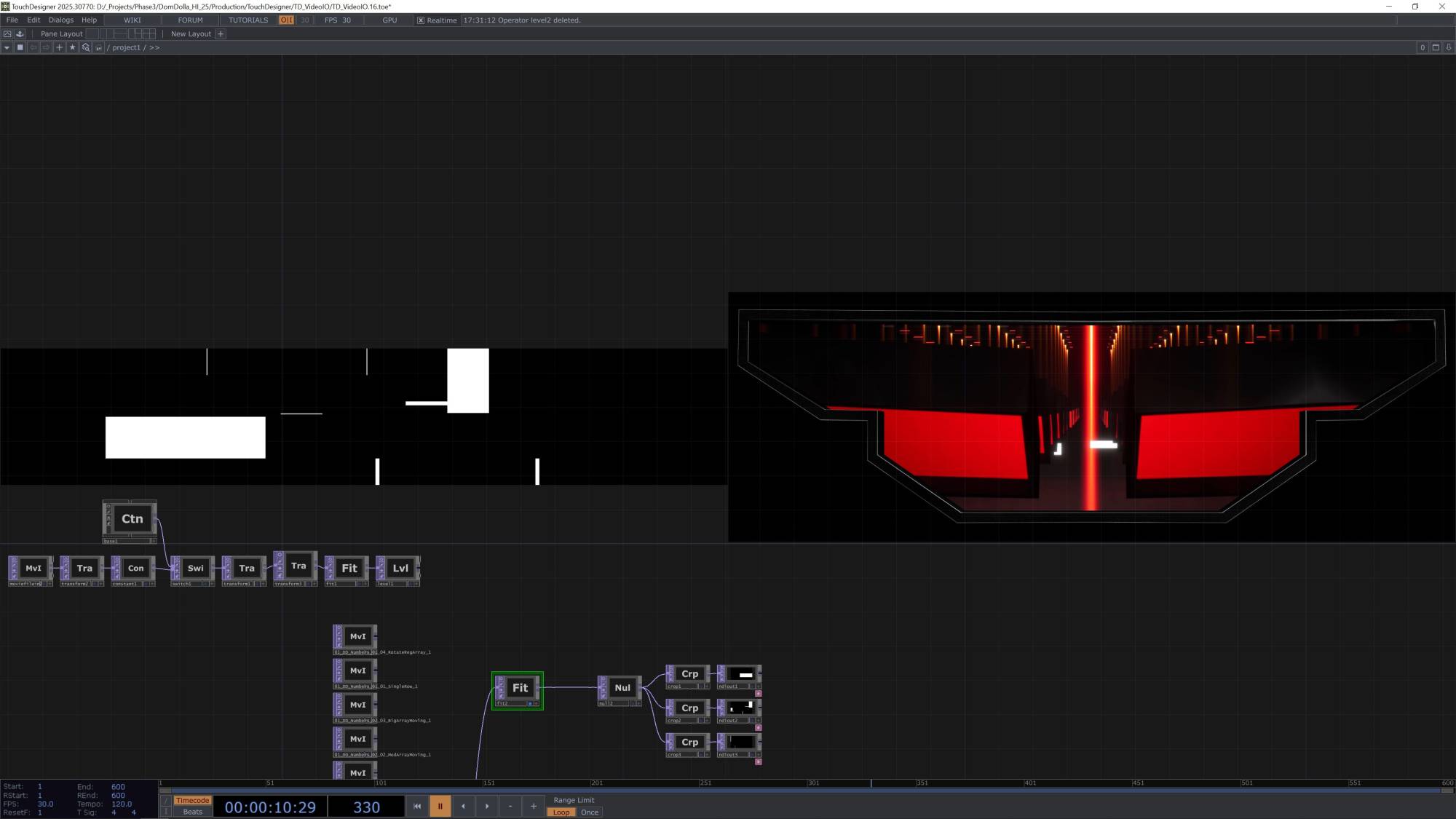

DOM DOLLA Hï IBIZA 2025

For Dom Dolla’s Wednesday residency at Hï Ibiza, Oliver developed a real-time set extension in Unreal Engine, integrated directly into the lighting workflow. Collaborating with lighting designer David Fairless and Phase 3 Concepts, he built a virtual architecture that expands the Theatre beyond its physical boundaries, with DMX-controlled world elements and virtual fixtures programmed as if they were part of the physical rig. Oliver also engineered a compact, flight-safe hardware system to make the design tour-ready, allowing the illusion to be deployed quickly and reliably in any venue.

Oliver worked closely with David Fairless to design and deliver a real-time rendering pipeline that extended the visual depth of the Theatre at key moments in the show. The extension was built as a fully lit Unreal world, with virtual fixtures and architectural elements programmed directly from the lighting desk. This meant the LD could treat the virtual set as if it were part of the physical rig, with look changes, colour shifts, and effects running in sync with the show’s cues.

The system was optimised for live performance, running a lean Unreal build designed for reliability and low latency. Control data was bridged directly from the console into Unreal, ensuring the physical and virtual rigs stayed locked together. When triggered, the extension transformed the space - the Theatre appeared to open out beyond its walls, creating an illusion of impossible depth and scale.
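The write-up does not name the protocol used to bridge console data into Unreal. Assuming Art-Net (a common output from grandMA3), the receiving side reduces to decoding ArtDMX packets and reading each virtual fixture's channels from its patch address. Unreal Engine ships DMX plugins that can receive Art-Net and sACN; the sketch below only illustrates the decoding idea, and the four-channel fixture layout is hypothetical.

```python
import struct

def parse_artdmx(packet):
    """Return (universe, dmx_bytes) for an ArtDMX packet, or None for other packets."""
    if len(packet) < 18 or packet[:8] != b"Art-Net\x00":
        return None
    (opcode,) = struct.unpack_from("<H", packet, 8)
    if opcode != 0x5000:                       # not an ArtDMX packet
        return None
    sub_uni, net = packet[14], packet[15]
    universe = (net & 0x7F) << 8 | sub_uni     # 15-bit port address
    (length,) = struct.unpack_from(">H", packet, 16)
    return universe, packet[18:18 + length]

def read_fixture(dmx, start_address):
    """Read a hypothetical 4-channel fixture (dimmer, R, G, B) at a 1-based address."""
    offset = start_address - 1
    dimmer, r, g, b = (dmx[offset + i] if offset + i < len(dmx) else 0 for i in range(4))
    return {"intensity": dimmer / 255, "color": (r / 255, g / 255, b / 255)}
```

The virtual fixture's light component would then take `intensity` and `color` each frame, so a cue fired on the desk moves physical and virtual fixtures together.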

To make the setup practical for touring, Oliver specified a compact hardware and workflow solution that Fairless’ team could travel with as carry-on. High-performance workstations, a repeatable showfile structure, and streamlined FOH integration meant the system could be deployed quickly in any venue. Once connected, the virtual set slotted into the lighting workflow like another fixture group - an invisible trick until the moment it was revealed to the audience.

Tech: TouchDesigner, Unreal Engine, Resolume, GrandMA3, Vectorworks

Credits

- Real-time Content Development | Systems Design | Software Engineer: Oliver Ellmers

- Project Lead | Lighting Designer: David Fairless

- Production Design | Content Direction: PHASE[3]

Those About To Die

Those About to Die, directed by Roland Emmerich and starring Anthony Hopkins, featured Oliver as Content Supervisor and Lead TouchDesigner Developer/Operator. He developed a custom TouchDesigner virtual production system to drive a large-scale LED volume, synchronizing multiple servers to deliver ultra high-resolution cylindrical projections. The system incorporated real-time synchronization, live color grading, camera tracking, and modular workflows, pushing the boundaries of large-format LED content delivery for cinematic virtual production.

In early 2023, after joining Dimension Studio | DNEG 360 as Integrations Technical Director, Oliver took on the roles of Content Supervisor and Lead TouchDesigner Developer/Operator for Those About to Die, the Roland Emmerich-directed series starring Anthony Hopkins, filmed at Cinecittà Studios.

For the first half of the production, he led the virtual production effort, designing, developing, and operating a custom TouchDesigner playback system to drive a large-scale LED volume comprising a 16,128 × 2,816 curved wall and a 3,840 × 4,320 ceiling. The system ran across seven custom-built, high-performance servers, each delivering full-resolution 10-bit 16K × 5K cylindrical content at 24fps. Because the spherical projection and dynamic stage rotation made video slicing unviable, each server handled the full-resolution content, and all nodes had to remain perfectly synchronized.
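A back-of-envelope calculation using the resolutions quoted above shows the scale of the LED canvas. The 10-bit, three-channel, 24 fps, uncompressed figure is an illustrative assumption about raw bandwidth, not a description of the actual compressed playback.

```python
WALL = (16_128, 2_816)      # curved wall resolution from the write-up
CEILING = (3_840, 4_320)    # ceiling resolution from the write-up

def pixels(resolution):
    width, height = resolution
    return width * height

def uncompressed_gbps(pixel_count, bits_per_channel=10, channels=3, fps=24):
    """Raw video bandwidth in gigabits per second, ignoring blanking and subsampling."""
    return pixel_count * bits_per_channel * channels * fps / 1e9

total = pixels(WALL) + pixels(CEILING)
print(pixels(WALL), pixels(CEILING), total)
print(round(uncompressed_gbps(total), 1))
```

This gives 45,416,448 wall pixels plus 16,588,800 ceiling pixels, 62,005,248 in total, or roughly 44.6 Gbit/s if the frames were moved uncompressed, which helps explain why GPU-decoded codecs such as HAP are attractive at this scale.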

To address this, Oliver implemented a genlocked sync architecture using TouchDesigner’s Pro Sync protocol, locked to a Stype camera tracking system. This ensured accurate parallax and perspective in-camera from any angle, with no tearing or drift.

Maintaining system-wide consistency posed a key technical challenge. To solve it, he developed a TOX-based modular component workflow, where tagged operators were versioned and automatically synced across all nodes via a local Git server. A table-driven control system streamed live parameter updates to all clients, enabling hot-reload of system changes without downtime.
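A table-driven update scheme can be sketched independently of TouchDesigner (which has its own Table DATs and externalised `.tox` components for this kind of work). The minimal version below, with hypothetical operator paths and parameter names, compares the previous parameter table against the new one, sends only the changed rows, and lets every node apply them in place.

```python
def diff_tables(old, new):
    """Return rows added or changed between two parameter tables.

    Tables map (operator_path, parameter_name) -> value. Removed rows are not
    handled in this sketch.
    """
    return {key: value for key, value in new.items() if old.get(key) != value}

class Client:
    """A render node that applies streamed parameter updates to its local state."""

    def __init__(self, name):
        self.name = name
        self.params = {}

    def apply(self, updates):
        self.params.update(updates)

def broadcast(old, new, clients):
    """Send only the changed rows to every client and return what was sent."""
    updates = diff_tables(old, new)
    for client in clients:
        client.apply(updates)
    return updates

baseline = {("/project/grade", "exposure"): 0.0, ("/project/player", "speed"): 1.0}
revised = {("/project/grade", "exposure"): 0.5, ("/project/player", "speed"): 1.0}
nodes = [Client(f"node{i}") for i in range(1, 8)]   # seven render servers
sent = broadcast({}, baseline, nodes)               # initial full sync
sent = broadcast(baseline, revised, nodes)          # only the exposure change is sent
```

Sending deltas rather than whole tables keeps each update small, so a change can be pushed mid-session without restarting playback on any node.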

The playback pipeline ran in 16-bit HAP HDR for expanded dynamic range and robust performance. Oliver also integrated a networked OpenColorIO CDL workflow for live in-camera grading, giving the DIT full remote control via OSC from the director’s tent. In addition, he developed a wireless, iPad-controllable light card system for on-set lighting augmentation.
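The grading maths behind a CDL workflow is a published ASC standard: per-channel slope, offset and power, followed by a single saturation control weighted by Rec. 709 luma. The sketch below applies it to one normalised RGB triple, assuming the clamped form that limits values to [0, 1]. It is not the OpenColorIO API (OCIO's `CDLTransform` evaluates this for you), and the OSC control side is not shown.

```python
REC709_LUMA = (0.2126, 0.7152, 0.0722)   # luma weights used by ASC CDL saturation

def clamp01(x):
    return min(max(x, 0.0), 1.0)

def apply_cdl(rgb, slope=(1, 1, 1), offset=(0, 0, 0), power=(1, 1, 1), saturation=1.0):
    """Apply an ASC CDL (slope, offset, power, then saturation) to one RGB triple."""
    # SOP: out = clamp(in * slope + offset) ** power, per channel
    sop = [clamp01(c * s + o) ** p for c, s, o, p in zip(rgb, slope, offset, power)]
    # Saturation: scale each channel's distance from the luma value
    luma = sum(w * c for w, c in zip(REC709_LUMA, sop))
    return tuple(clamp01(luma + saturation * (c - luma)) for c in sop)
```

With the default values the function is an identity, and `saturation=0.0` collapses every pixel to its Rec. 709 luma, which makes it easy to sanity-check against a reference grade.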

The entire system was built on a modular architecture, allowing scene presets to be saved and recalled based on digislate metadata—supporting rapid reconfiguration and continuity across takes.
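Recalling presets from slate metadata can be reduced to a keyed store. In this hypothetical sketch the slate is a dictionary with `scene` and `take` fields (the real digislate metadata format is not described), and presets are keyed by scene alone so every take of a scene recalls the same setup.

```python
from dataclasses import dataclass, field

@dataclass
class PresetStore:
    """Scene presets keyed by the scene identifier from (hypothetical) slate metadata."""
    presets: dict = field(default_factory=dict)

    def save(self, slate, state):
        self.presets[slate["scene"]] = dict(state)   # store a copy of the state

    def recall(self, slate, default=None):
        """Return the preset for the slate's scene, regardless of take number."""
        return self.presets.get(slate["scene"], default)
```

Keying on the scene rather than the take is what gives continuity: take 3 comes back with exactly the environment and stage rotation saved during take 1.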

This project marked a major advancement in large-format LED content delivery—real-time, multi-node, and proven at cinematic scale.

Oliver discusses this project in more detail in his presentation at the TouchDesigner Event Berlin 2024.

Tech: TouchDesigner

Credits

- Directors: Roland Emmerich & Marco Kreuzpaintner

- Distributors: Peacock | Amazon Prime Video

- Virtual Production: Dimension | DNEG 360

- Integrations TD | Content Supervisor | TouchDesigner Developer/Operator: Oliver Ellmers

Röyksopp Live at HERE at Outernet

As Technical Lead for Dimension LIVE, Oliver developed a real-time system for Röyksopp Live at HERE at Outernet, seamlessly blending physical and virtual stage elements into a unified visual environment. In collaboration with lighting designer David Ross, he extended the lighting rig into a fully interactive 3D set rendered in real time and controlled entirely from the lighting console. The result was a virtually indistinguishable fusion of digital and physical space, pushing the boundaries of contemporary live show design.

For Röyksopp Live at HERE at Outernet, Oliver served as Technical Lead for Dimension LIVE, collaborating closely with Lighting Designer David Ross to deliver a fully interactive virtual stage extension. The system integrated real-time rendered 3D set elements and virtual DMX-controlled fixtures, all seamlessly operated from David’s lighting console—enabling unified control over physical lights, virtual fixtures, and dynamic 3D environments from a single interface.

A high-fidelity digital twin of the HERE venue was developed, complete with accurate rigging and truss data. Into this environment, Oliver integrated digital replicas of the venue’s in-house lighting fixtures, including extension lighting and wall-mounted LED strip lights that visually connect the stage to the broader venue architecture. The exceptional visual quality and realism of the real-time rendering blurred the boundary between the physical and virtual—making the transition between them virtually imperceptible.
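A digital replica of a moving-head fixture has to turn DMX values into physical angles. Pairing a coarse and a fine channel into one 16-bit value is standard DMX practice; the channel order (pan, pan fine, tilt, tilt fine) and the 540° / 270° mechanical ranges below are assumptions that vary from fixture to fixture.

```python
def combine_16bit(coarse, fine):
    """Combine coarse (MSB) and fine (LSB) DMX channels into a 0-65535 value."""
    return (coarse << 8) | fine

def to_angle(value16, range_degrees):
    """Map a 16-bit DMX value onto a fixture's mechanical range, centred on zero."""
    return (value16 / 65535 - 0.5) * range_degrees

def moving_head_pose(dmx, start_address, pan_range=540.0, tilt_range=270.0):
    """Decode pan/tilt for a hypothetical mode: pan, pan fine, tilt, tilt fine."""
    i = start_address - 1
    pan = combine_16bit(dmx[i], dmx[i + 1])
    tilt = combine_16bit(dmx[i + 2], dmx[i + 3])
    return to_angle(pan, pan_range), to_angle(tilt, tilt_range)
```

Using the same personality data for the real and virtual fixture is what lets a position preset on the desk land on the same point in both worlds.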

This project represents a major advancement in live show design, bridging virtual production techniques with live entertainment in a way unmatched by any other studio to date.

Tech: TouchDesigner, TouchEngine, Unreal Engine, GrandMA, ChamSys

Credits

- Artist: Röyksopp

- Lighting Designer: David Ross

- LIVE VP Integration: Dimension LIVE

- Technical Lead: Oliver Ellmers

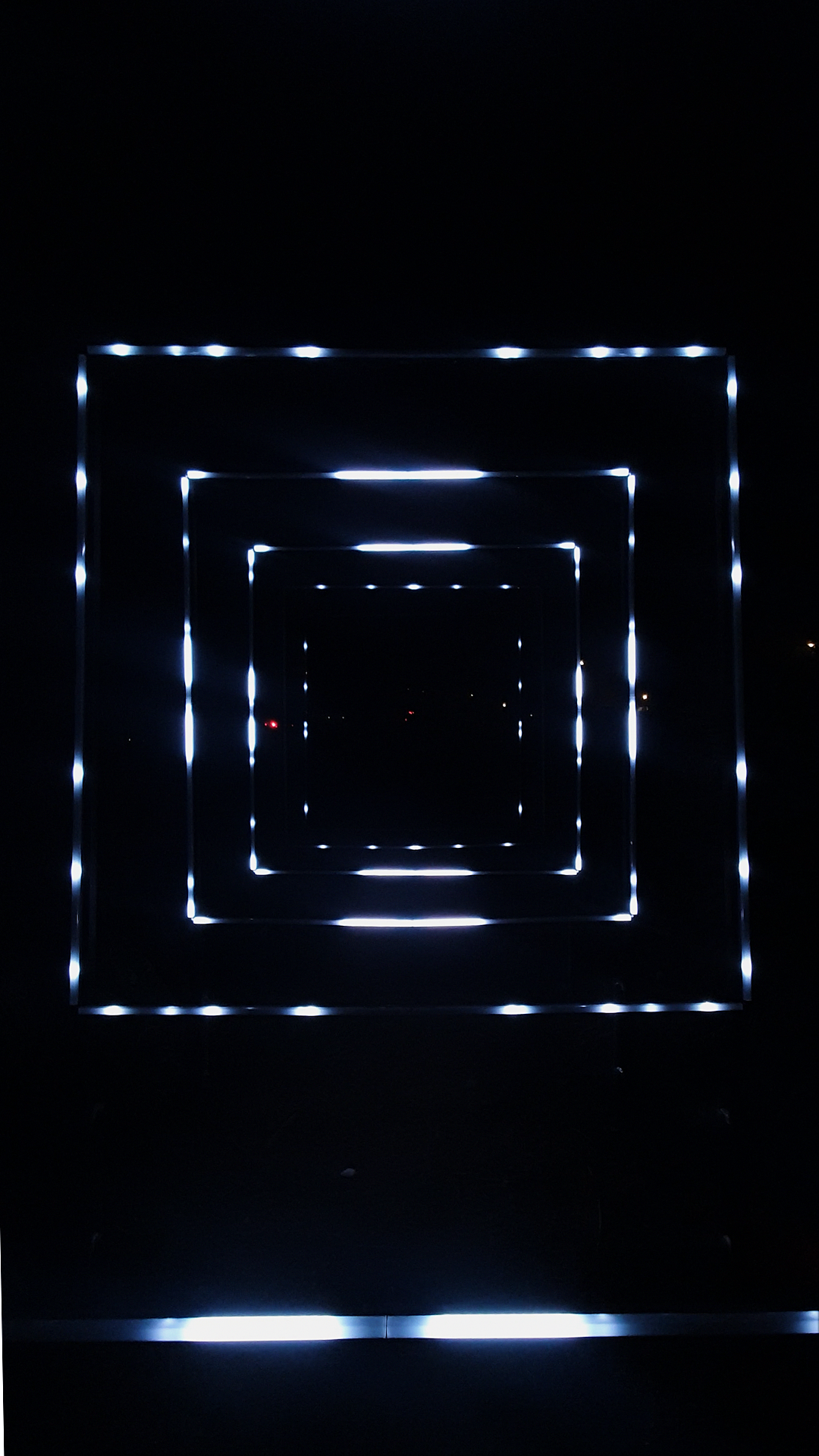

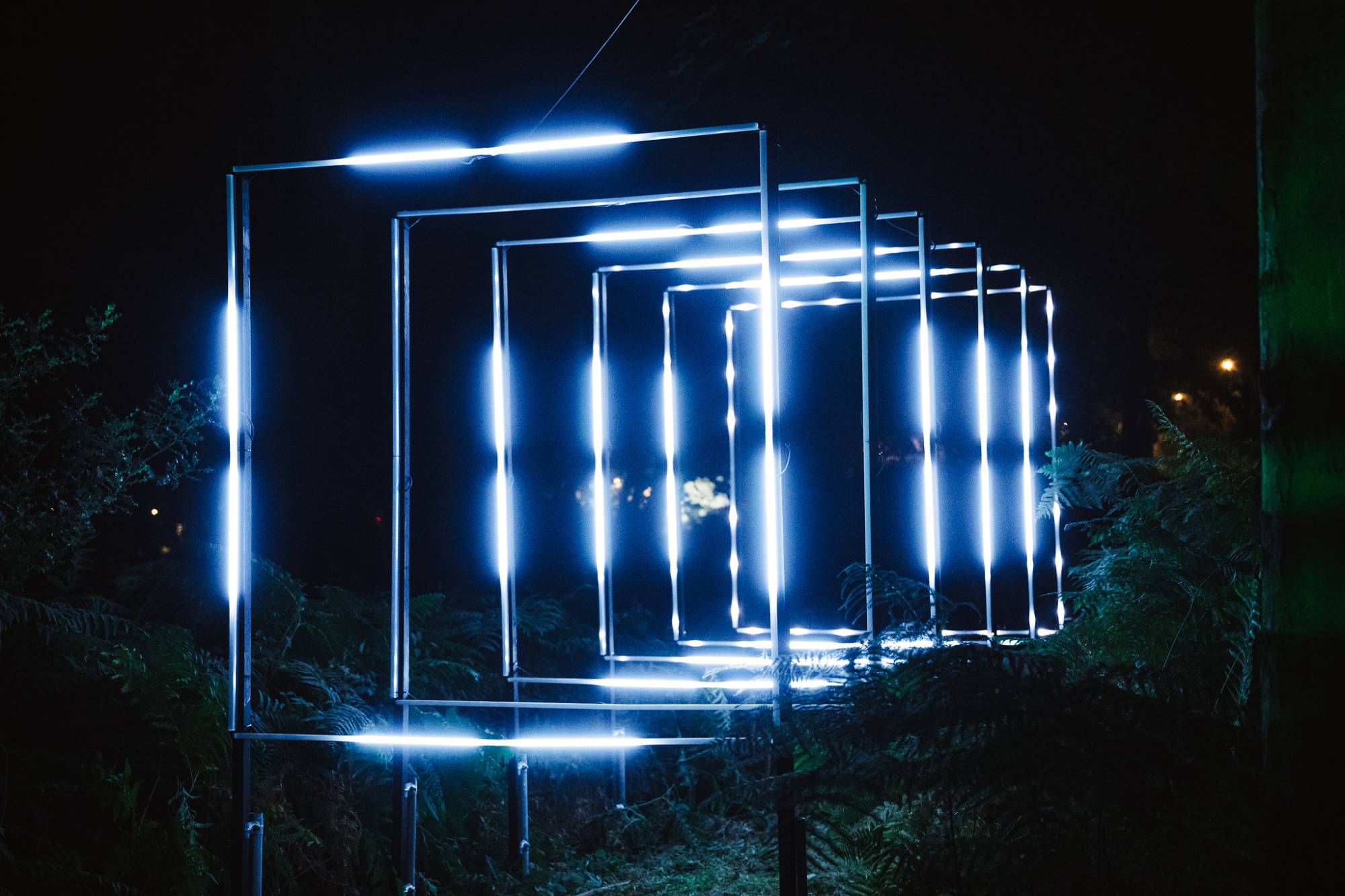

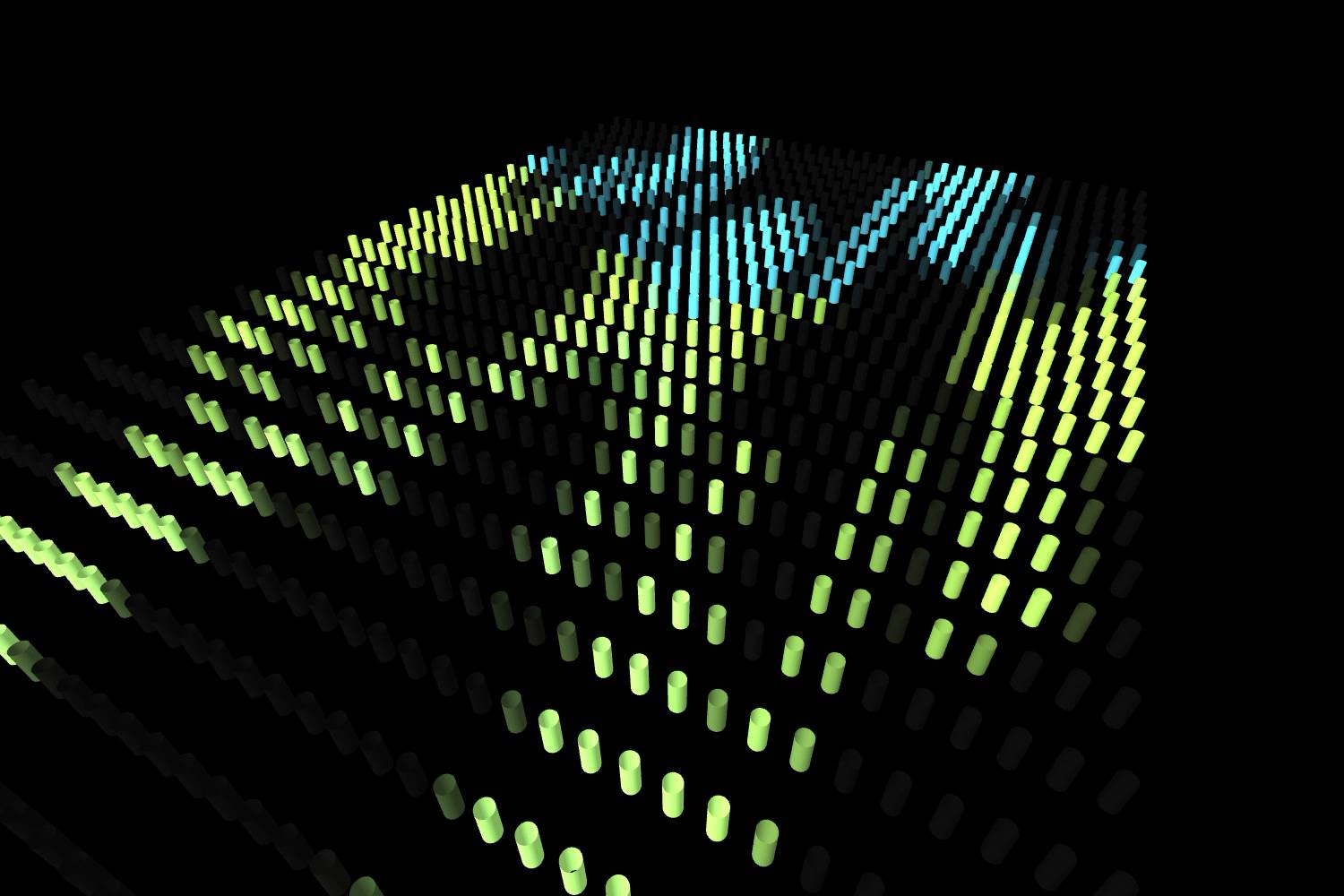

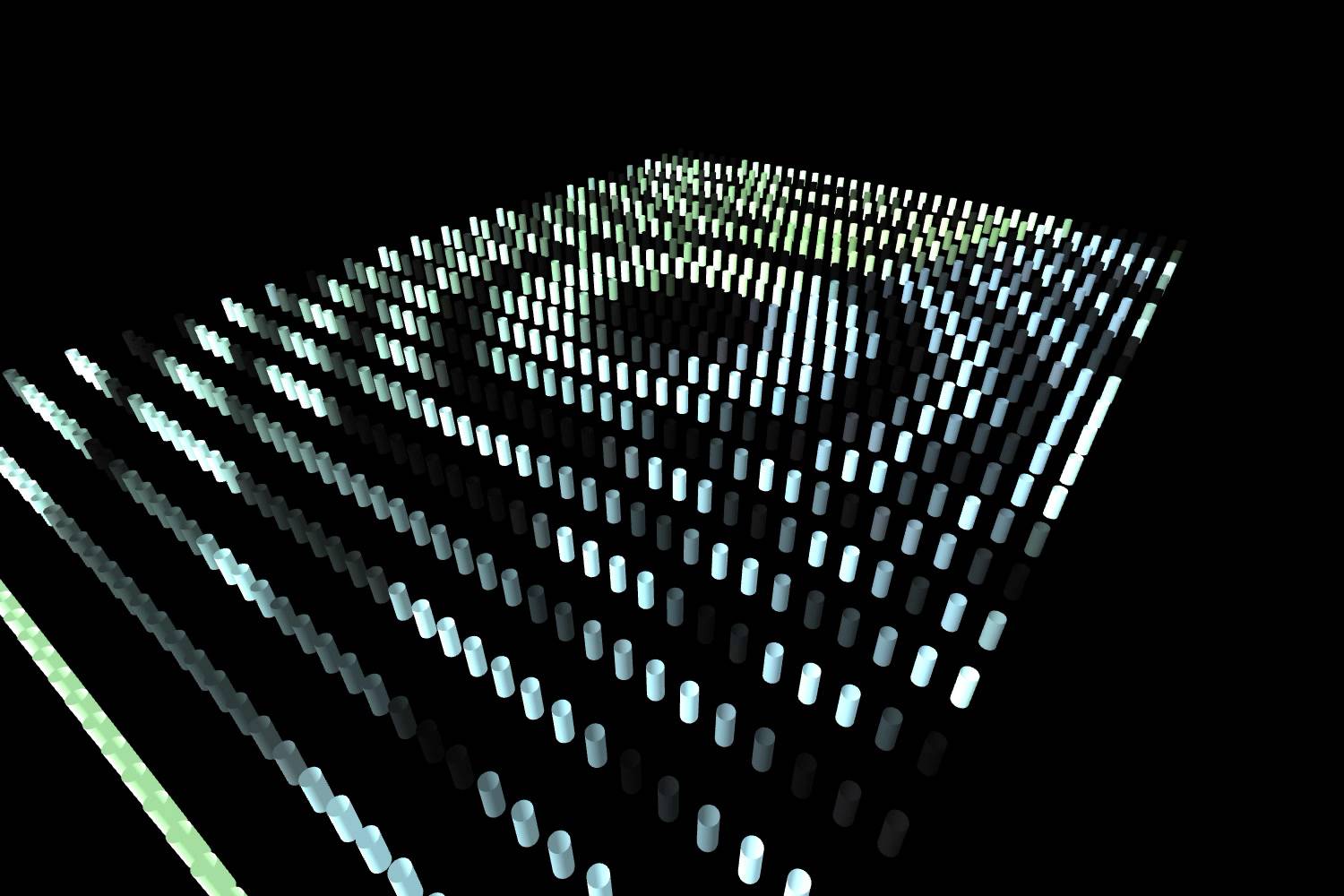

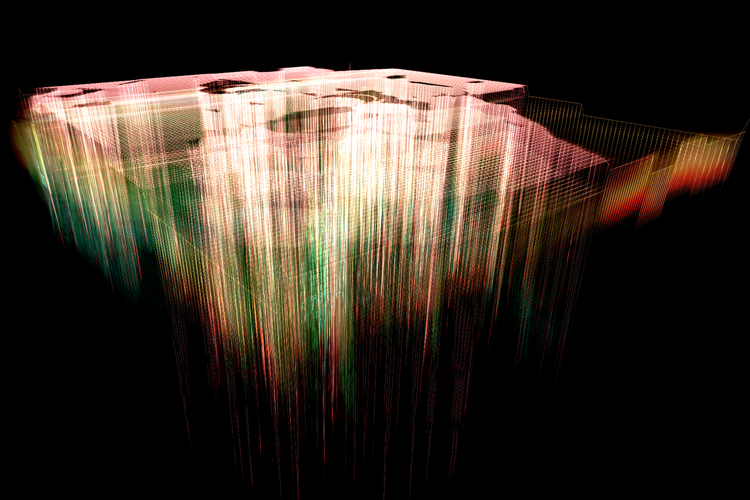

Houghton 24

For Houghton Festival 2024, Oliver collaborated with Oliver Hupfau and curator Craig Richards to develop a generative content system in TouchDesigner, producing a rich library of custom VJ visuals drawn from Craig’s personal archive of video, photography, and artwork.

Together, they also co-created The ABI—a reactive LED installation built using TouchDesigner and Ableton that visualises live audio while simultaneously generating sound in a continuous feedback loop. To support the design and previsualisation process, a digital twin of the sculpture was developed in Unreal Engine.

As part of an ongoing collaboration with Houghton Festival, Oliver worked with Oliver Hupfau for the 2024 edition to develop a custom system for generating VJ content in close collaboration with curator Craig Richards.

The assets were procedurally generated in TouchDesigner using a bespoke pipeline that ingested video, photography, and scanned artworks from Craig’s personal archive. Hupfau brought these elements to life through 2D animation; the animated material was then composited, treated with generative post effects, and rendered into a diverse visual library. The system automated the entire process, producing a rich catalogue of reactive content for VJs to use across multiple stages during the festival.
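Automating a render library usually starts with enumerating every combination of source, treatment and output format. In this hypothetical sketch the file names, effect names, resolutions and `.mov` naming scheme are all placeholders; each job would then be handed to whatever renders the frames (in TouchDesigner, for example, a Movie File Out TOP).

```python
from itertools import product

def build_render_jobs(sources, effects, resolutions):
    """Enumerate every source x effect x resolution combination as a render job."""
    jobs = []
    for source, effect, (width, height) in product(sources, effects, resolutions):
        stem = source.rsplit(".", 1)[0]          # drop the file extension
        jobs.append({
            "source": source,
            "effect": effect,
            "size": (width, height),
            "output": f"{stem}_{effect}_{width}x{height}.mov",
        })
    return jobs
```

Because the job list is generated rather than hand-built, adding one new archive scan or effect preset multiplies through the whole catalogue automatically.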

In addition to the video system, Oliver and Hupfau co-created a bespoke lighting installation titled The ABI—a reactive LED sculpture inspired by satellite sensor arrays. Built in TouchDesigner and Ableton, the installation transformed live audio input into generative visuals while simultaneously composing sound arrangements in real time, creating a continuous audio-visual feedback loop.

The ABI visualised high-frequency sound data through advanced LED control and real-time data processing techniques. To support design and previsualisation, a high-fidelity digital twin of the installation was developed in Unreal Engine, enabling accurate simulation of lighting behaviour and spatial interaction ahead of on-site deployment.
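The write-up does not detail the analysis behind The ABI. A common starting point for audio-reactive LEDs, sketched here under that assumption, is to window a block of samples, take an FFT, sum the energy in a set of frequency bands, and scale each band to an 8-bit brightness. The band edges and block size are hypothetical.

```python
import numpy as np

def band_levels(samples, sample_rate, edges):
    """Return normalised spectral energy in each band [lo, hi) defined by `edges`."""
    window = np.hanning(len(samples))                 # reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(samples * window)) ** 2
    freqs = np.fft.rfftfreq(len(samples), d=1.0 / sample_rate)
    energies = np.array([spectrum[(freqs >= lo) & (freqs < hi)].sum()
                         for lo, hi in zip(edges[:-1], edges[1:])])
    peak = energies.max()
    return energies / peak if peak > 0 else energies

def to_led_values(levels):
    """Scale 0..1 band levels to 8-bit LED brightness values."""
    return np.round(np.clip(levels, 0.0, 1.0) * 255).astype(np.uint8)
```

Normalising to the loudest band keeps the sculpture lively at any playback volume; a production system would typically add smoothing over time, as in an envelope follower, to avoid flicker.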

Tech: TouchDesigner, Ableton, Unreal Engine

Credits

- Technical Director | Developer/Programmer: Oliver Ellmers

- Animation | Sound Design: Oliver Hupfau

- Creative Collaborator: Craig Richards

- Light Installation: Lighthaus Studio

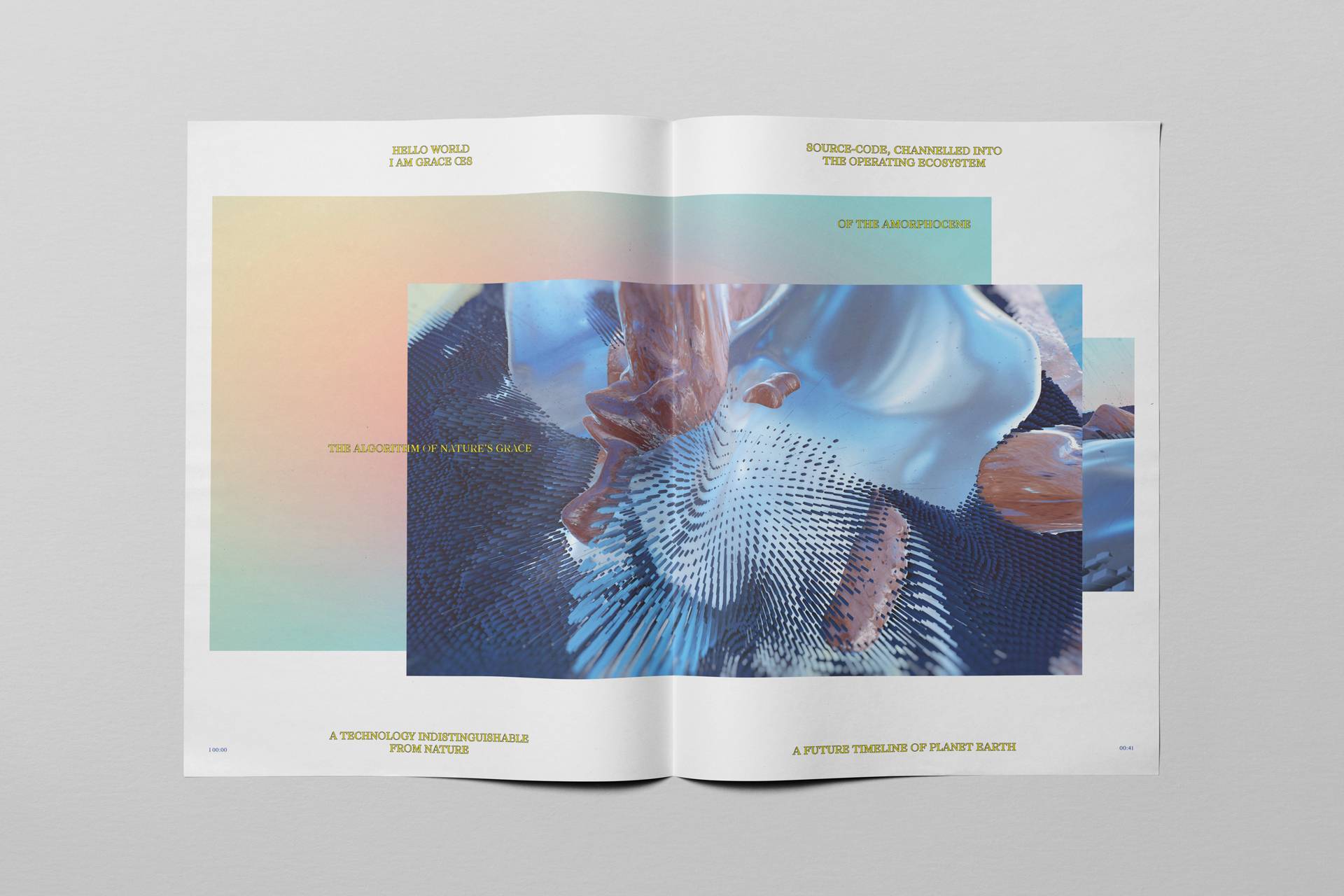

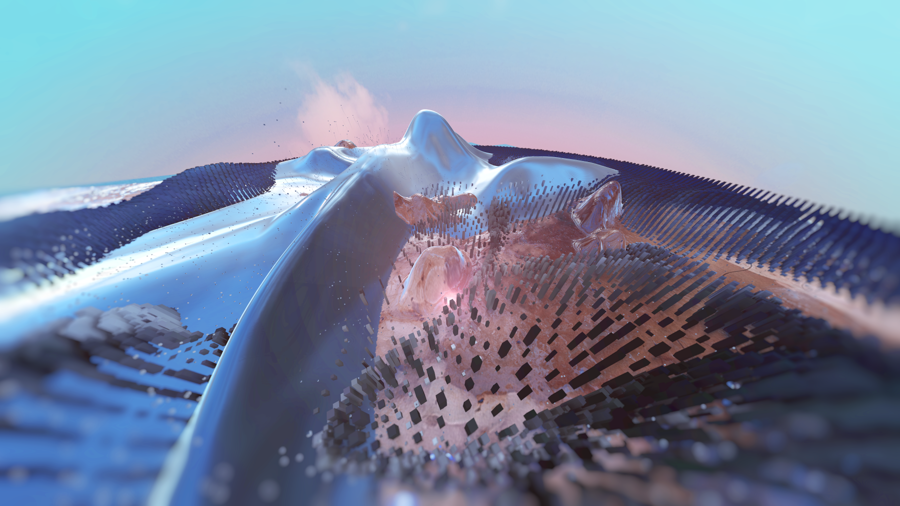

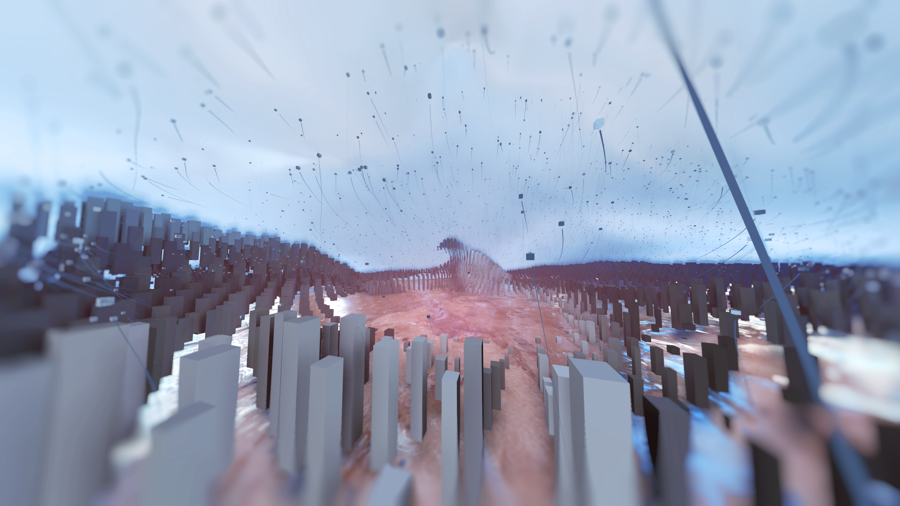

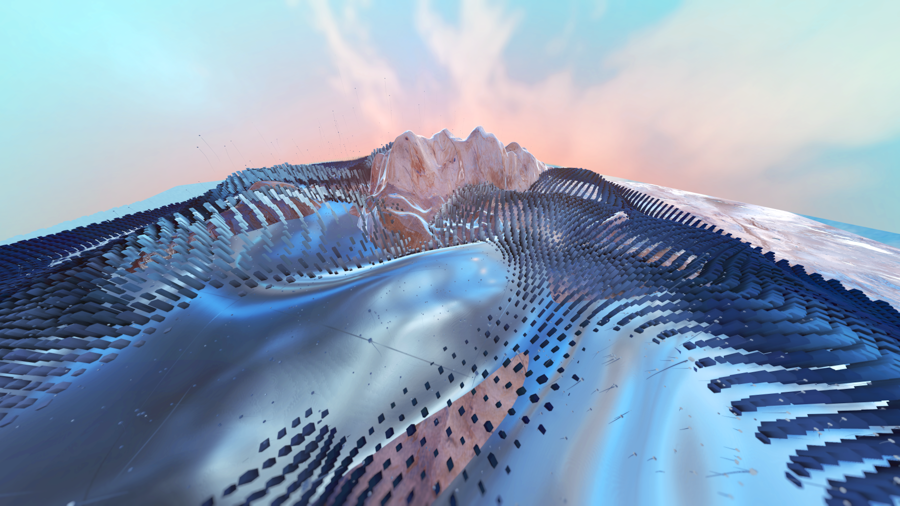

GRACE ŒS

GRACE ŒS (BETA) is an immersive, audio-visual installation, enveloping visitors in a future world, the AMORPHOCENE.

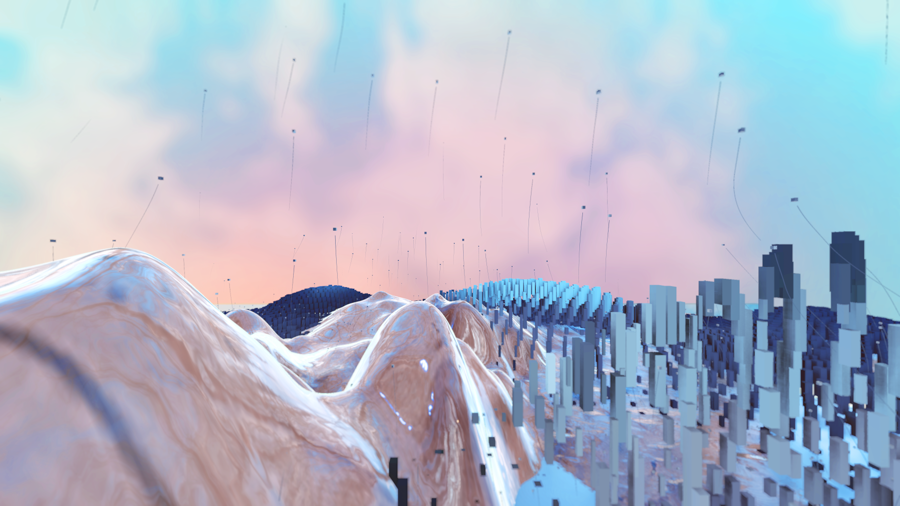

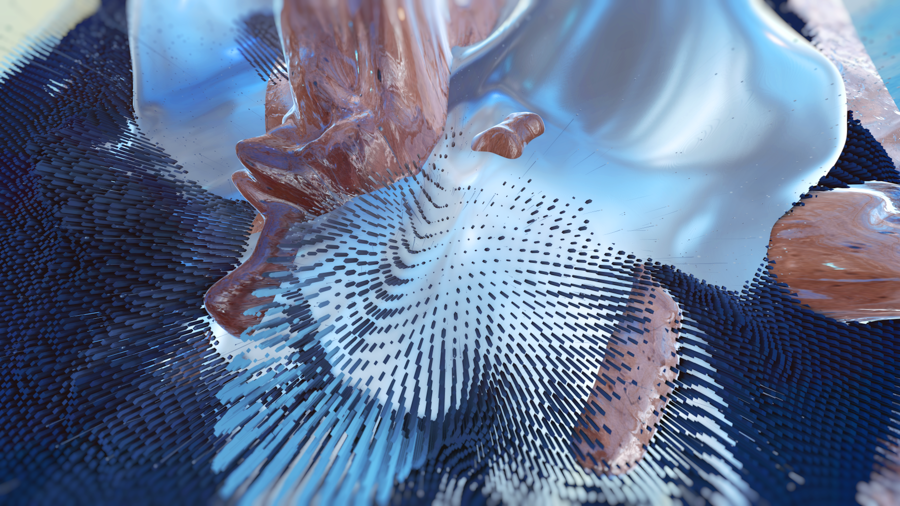

GRACE ŒS (BETA) is an immersive audio-visual installation that transports visitors into a speculative future world—the AMORPHOCENE. Set in a post-Anthropocentric era, this imagined future envisions humans as shapeshifters who have merged with the more-than-human world, creating technologies that are indistinguishable from nature itself.

The installation was exhibited until 6 March 2022 at Buitenplaats Doornburgh in Maarssen, the Netherlands, as part of the Robots in Captivity exhibition—presented alongside works by other artists and designers exploring the evolving relationship between humans and machines.

Through a combination of immersive sound design and procedural 3D imagery, the piece places audiences directly within this imagined future. The experience intentionally disrupts familiar perceptions and comfort zones, challenging visitors with its disorienting and otherworldly environment. By immersing them in a surreal and hopeful vision of coexistence with nature, the work opens a space for transformative reflection—shifting perspectives from anxiety about the future toward a sense of deep interconnection with the natural world.

Tech: Notch, TouchDesigner

Credits

- Artist / Founder: Lisanne Buik

- Assistant Researcher | Textile Artist: Bela Rofe

- Graphic Design | Art Direction: Tom Schwaiger

- Technical Direction | 3D Artist: Oliver Ellmers

- Sound Design: Harmen Sipkema | Ard Janssen

- Voice Artist: Anyah Sealey

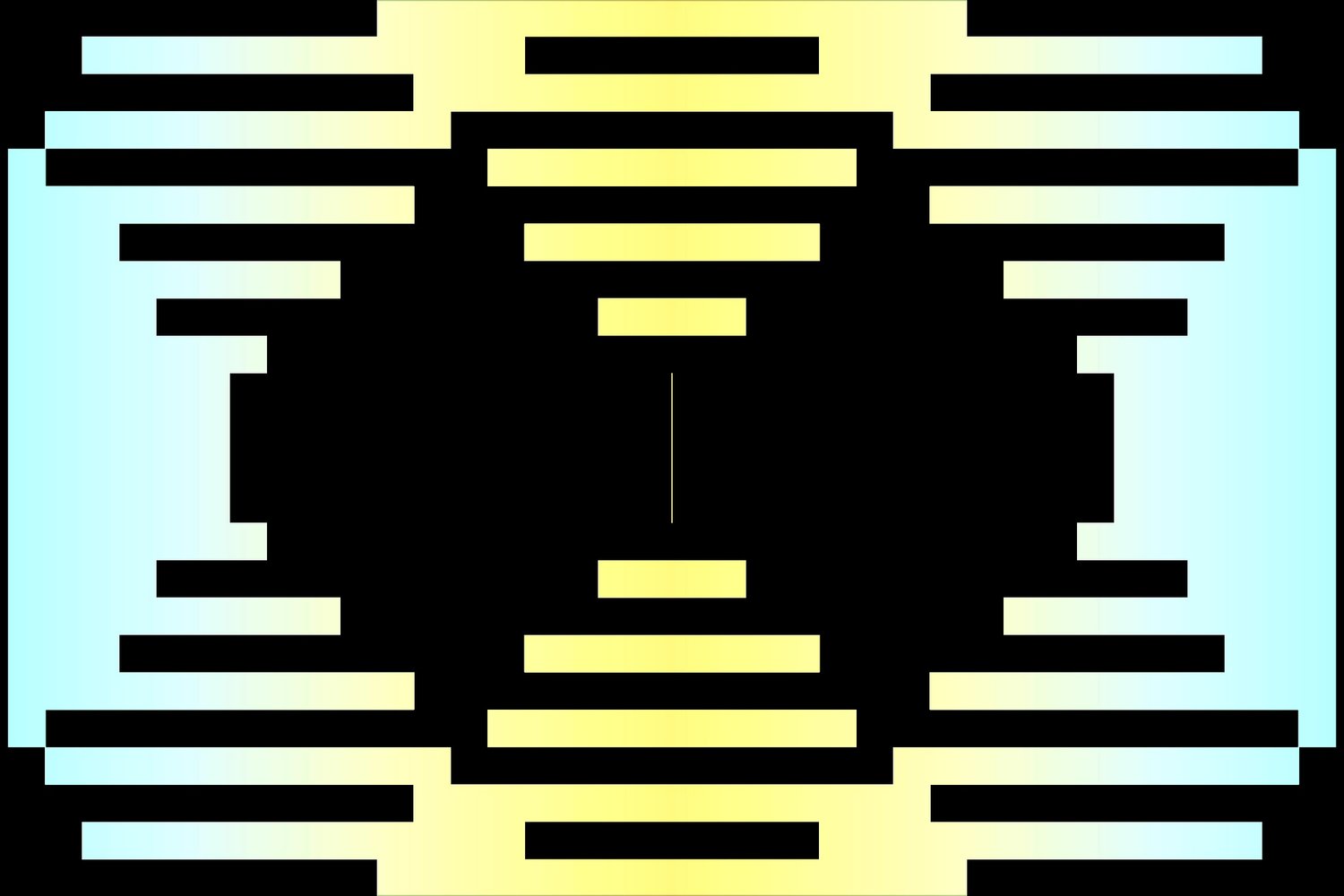

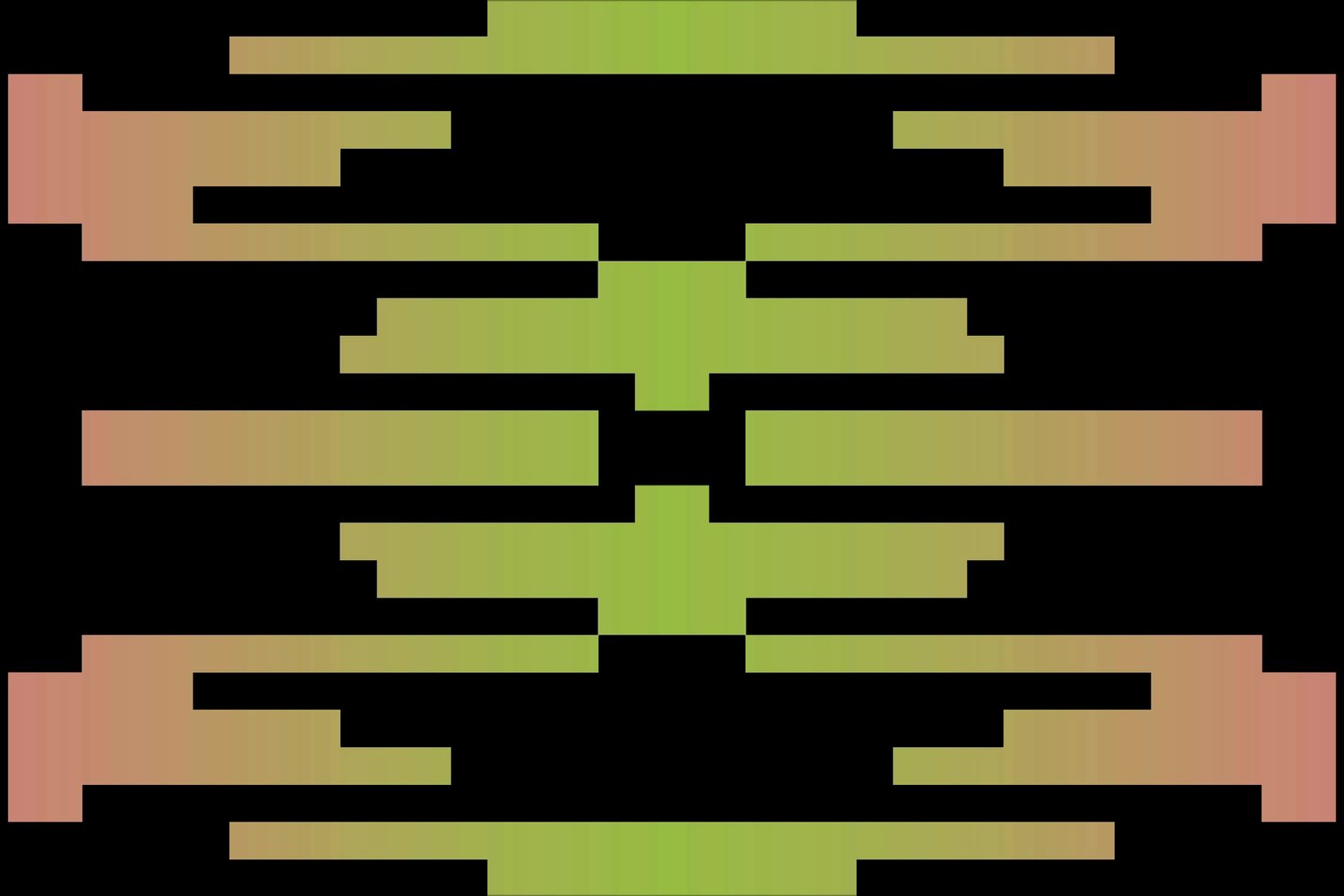

Human Movement

Album artwork, press & social content, and live tour graphics for Sydney-based house producer Human Movement's recent single releases Thermal, Its The Movement, Elevate, and Waiting, and for the long-awaited Kinetic EP.

Tech: Notch, TouchDesigner

Credits

- 3D Art + Motion Design: Oliver Ellmers

- Graphic Design + Art Direction: Thomas Schwaiger

adidas X Foot Locker: CYPHER FROM THE FUTURE

As part of the adidas X Foot Locker 2022 campaign EV3R_CHANGIN_VIS10NS for the NMD_V3 trainer, GoodMeasure collaborated with The Midnight Club to create CYPHER FROM THE FUTURE, an interactive WebXR experience that combines volumetric video and augmented reality.

adidas Originals and Foot Locker enlisted three rising European rappers—Nahir from France, Sainté from the UK, and Sacky from Italy—to collaborate on a cypher set in a futuristic cityscape. The musicians perform in a digitally augmented environment, with viewers able to project the cypher around them wherever they tune in. The campaign also features the rappers sporting the new trainers in a digitally distorted version of their home cities, blending fashion and music in a boundary-pushing digital experience.

Tech: Volumetric Capture, Unity 3D, Three.js, WebXR

Credits

- Design + Art Direction: The Midnight Club

- Volumetric Capture + Digital Production: GoodMeasure

- Technical Direction + Volumetric Technician: Oliver Ellmers | Pretty Lucky

Laurel Halo

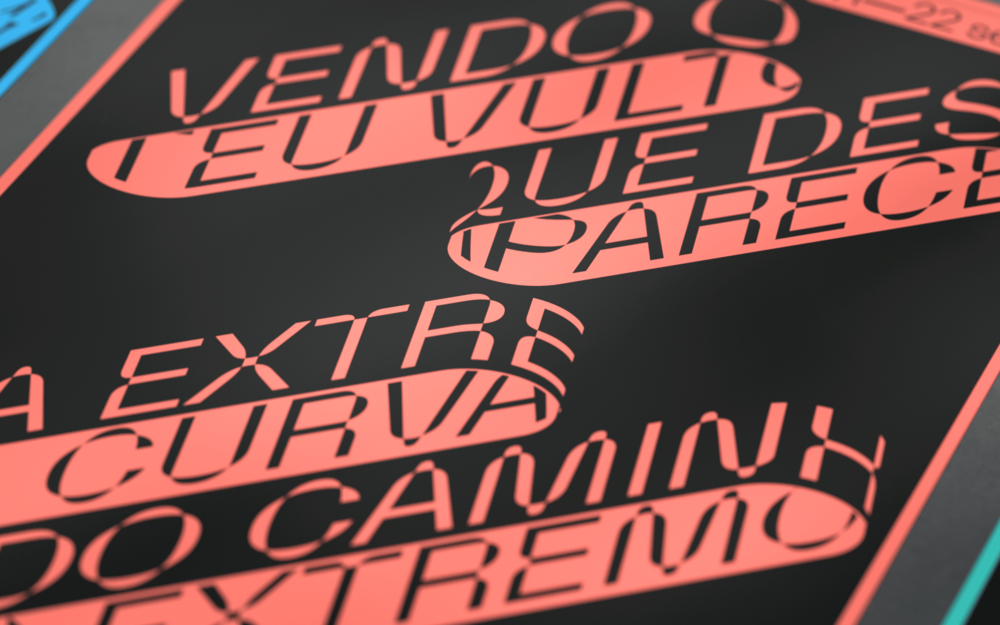

A dynamic poster series for the Laurel Halo Australian Tour 2018, commissioned by Astral People.

Gaia RA

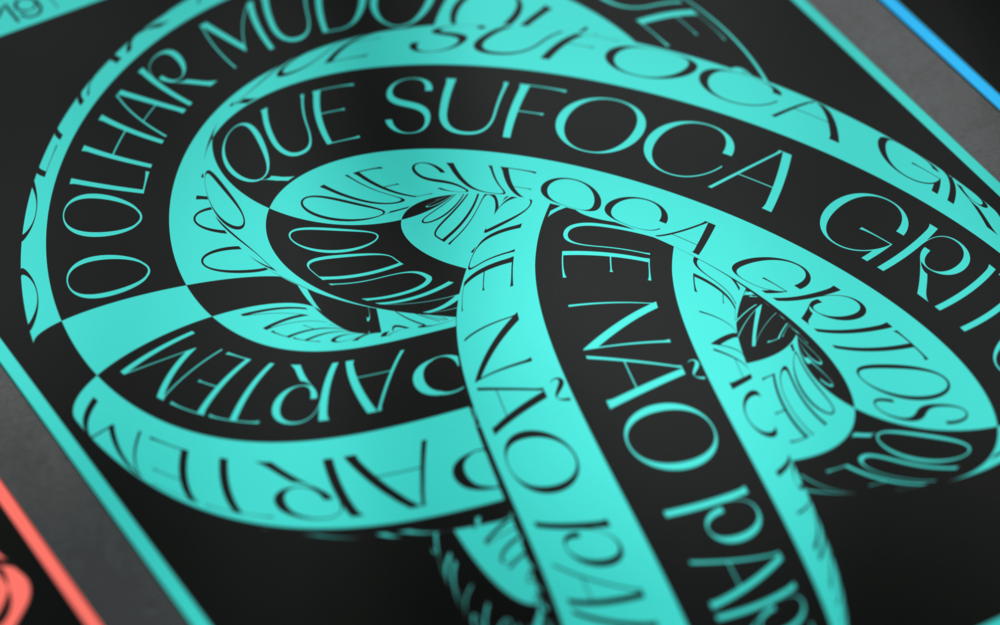

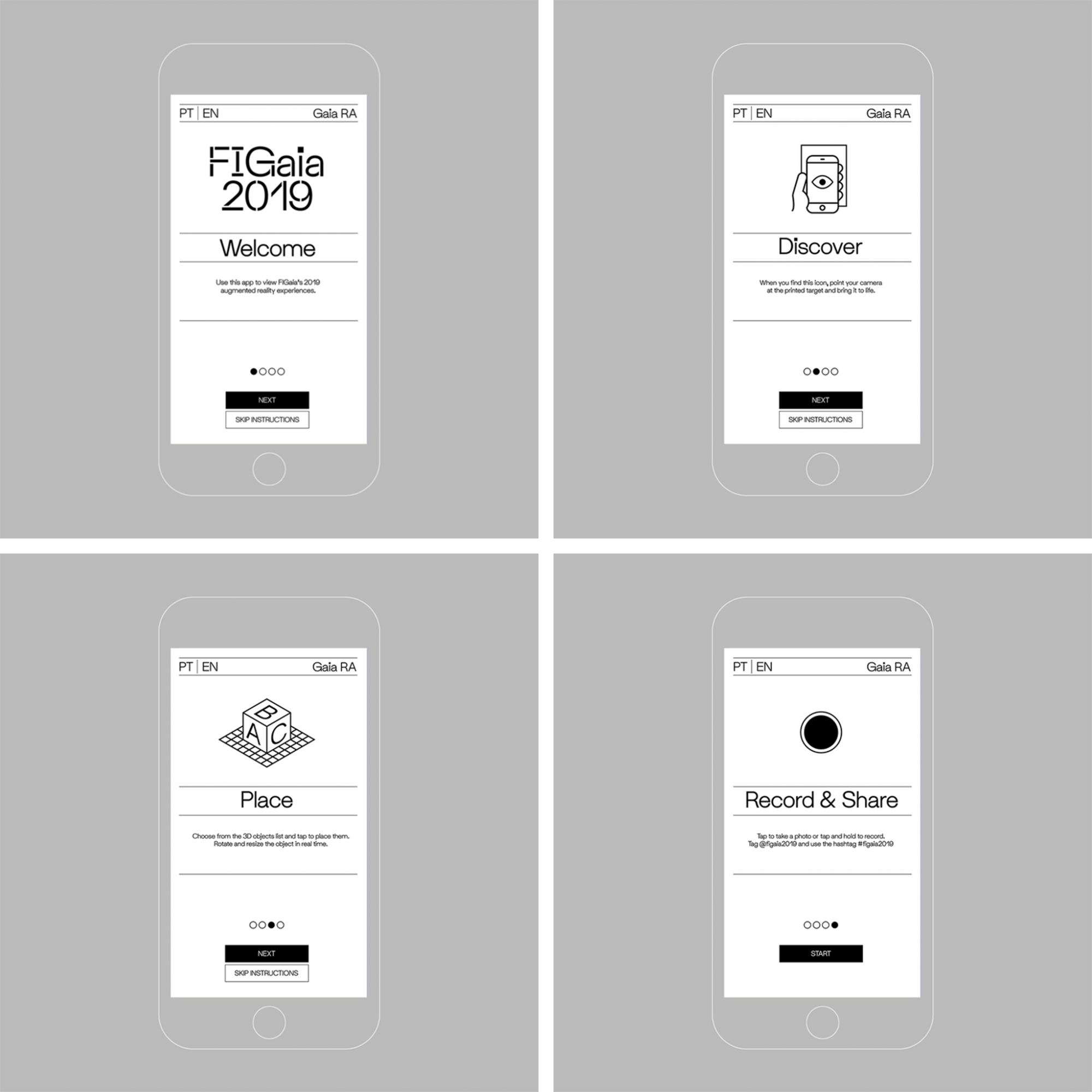

The Gaia International Forum (11-22 September 2019) inaugurated the new Poetry Block, a cultural space dedicated to the power of words, encompassing the Library and Municipal Auditorium. This space transformed streets and gardens into a permanent stage, offering a program that connected all localities of the county and became an irresistible meeting point to enjoy the best of Lusophone culture in the final weekends of summer.

The brief for the project included the design and delivery of branding, identity, print and digital materials, a website, and an interactive Augmented Reality (AR) experience. Through the AR application, users could interact with festival materials—such as billboards, posters, and flyers—decorating the city. The experience allowed users to place 3D interpretations of poems around the city, record their own compositions, and share them on social media.

The AR application became a central feature of the festival, driving audience engagement as users contributed to the festival and explored its other features.

For more information on the festival, visit FIGaia 2019.

The app is still available for download on the App Store and Google Play.

This project was awarded the Type Directors Club Certificate of Typographic Excellence.

More images and information can be viewed here.

Tech: Unity, C#, AR

Credits

- Identity/Graphic Design: Serafim Mendes

- Identity/Graphic Design: Diana Ferreira

- Art Direction: Maria João Ruivo

- Content Design: Serafim Mendes

HP Omen

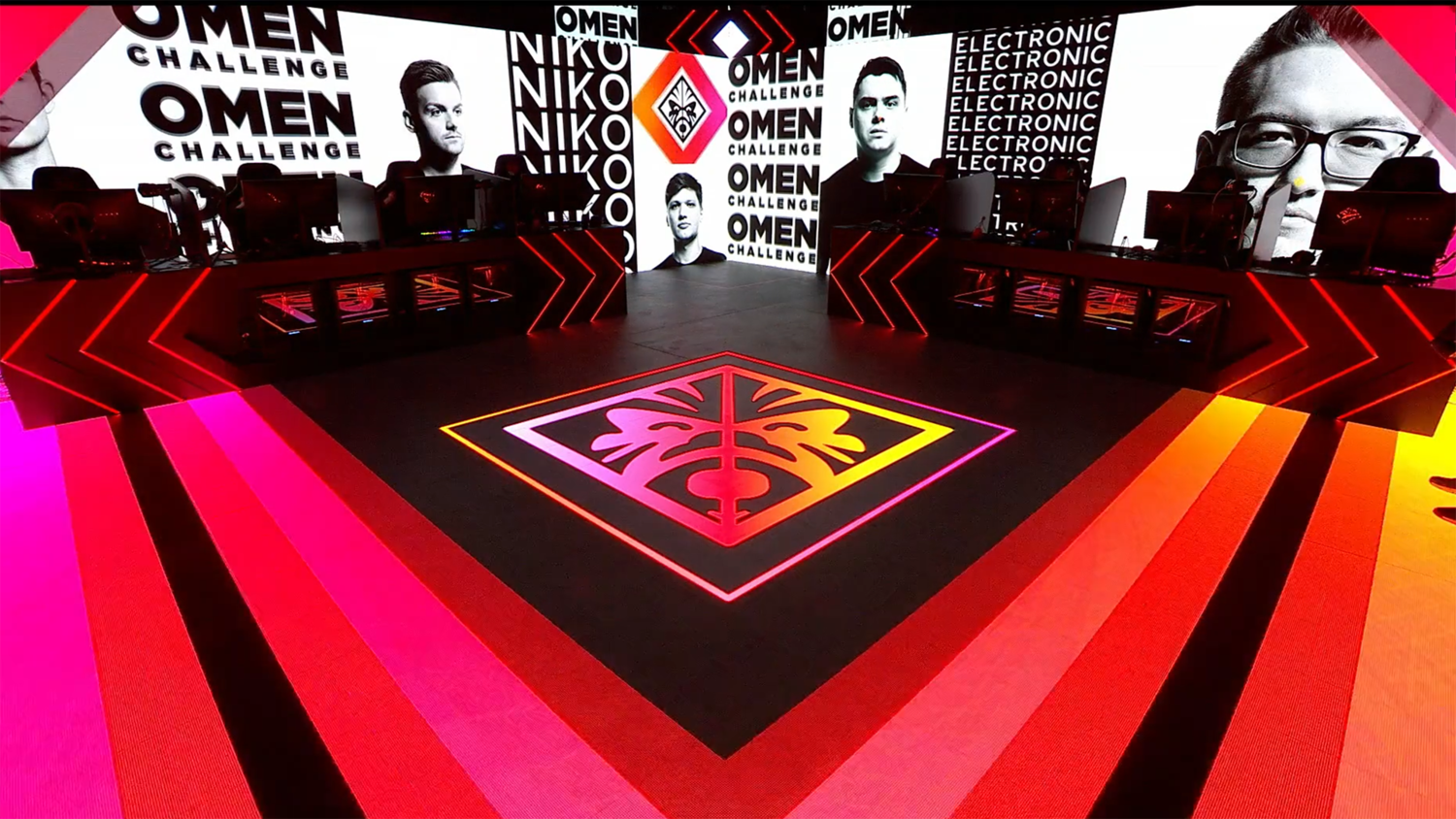

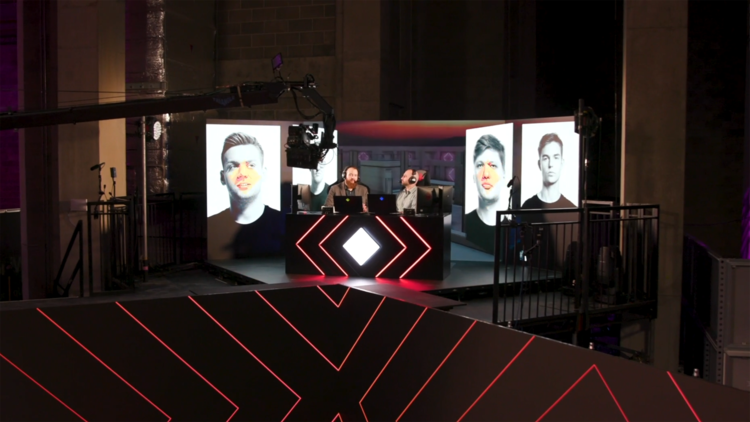

World’s First Live AR, MR, LED & E-Sports live-streamed event for HP Omen.

The world’s first live AR, MR, LED, and e-sports live-streamed event for HP Omen was commissioned by Scott Millar for Pixel Artworks and AKQA.

Oliver was employed as the in-house Interactive Developer at Pixel Artworks, where he contributed to the integration of disguise, TouchDesigner, and Notch pipeline systems, as well as real-time show operation.

The OMEN Challenge, held annually with the support of OMEN by HP, featured eight players competing for a $50,000 prize pool. The tournament included 1-on-1 and four-player deathmatches in Counter-Strike: Global Offensive. The event took e-sports beyond the traditional tournament format, offering a battle arena with immersive stage and content experiences.

The project, led by creative media agency AKQA and realized in collaboration with Scott Millar and Pixel Artworks, involved designing and developing a bespoke pipeline using disguise, Notch, and TouchDesigner. The result was a groundbreaking live broadcast, combining AR, Mixed Reality, and live show elements, all streamed live on Twitch and other platforms.

For a more in-depth technical breakdown, check out the full case study by disguise.

Tech: TouchDesigner, Notch, disguise, Python, XR

Credits

- Commission: Scott Millar

- Production: Pixel Artworks

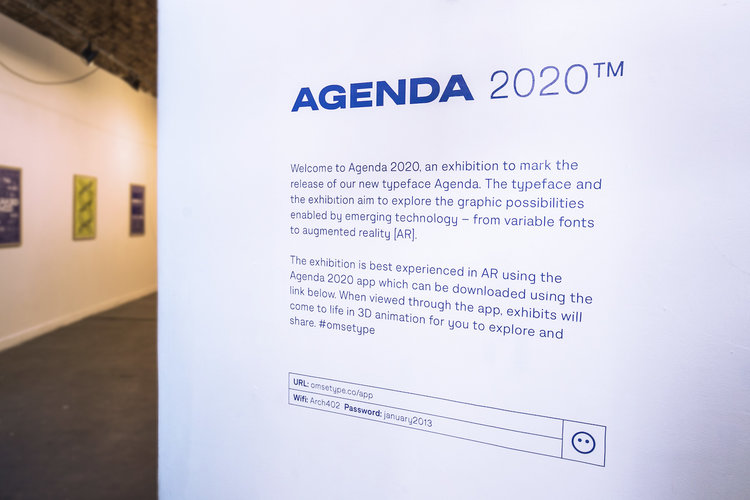

Agenda 2020

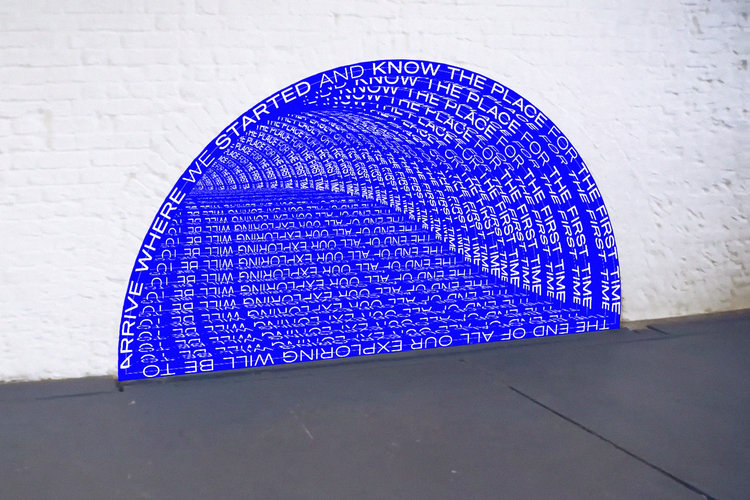

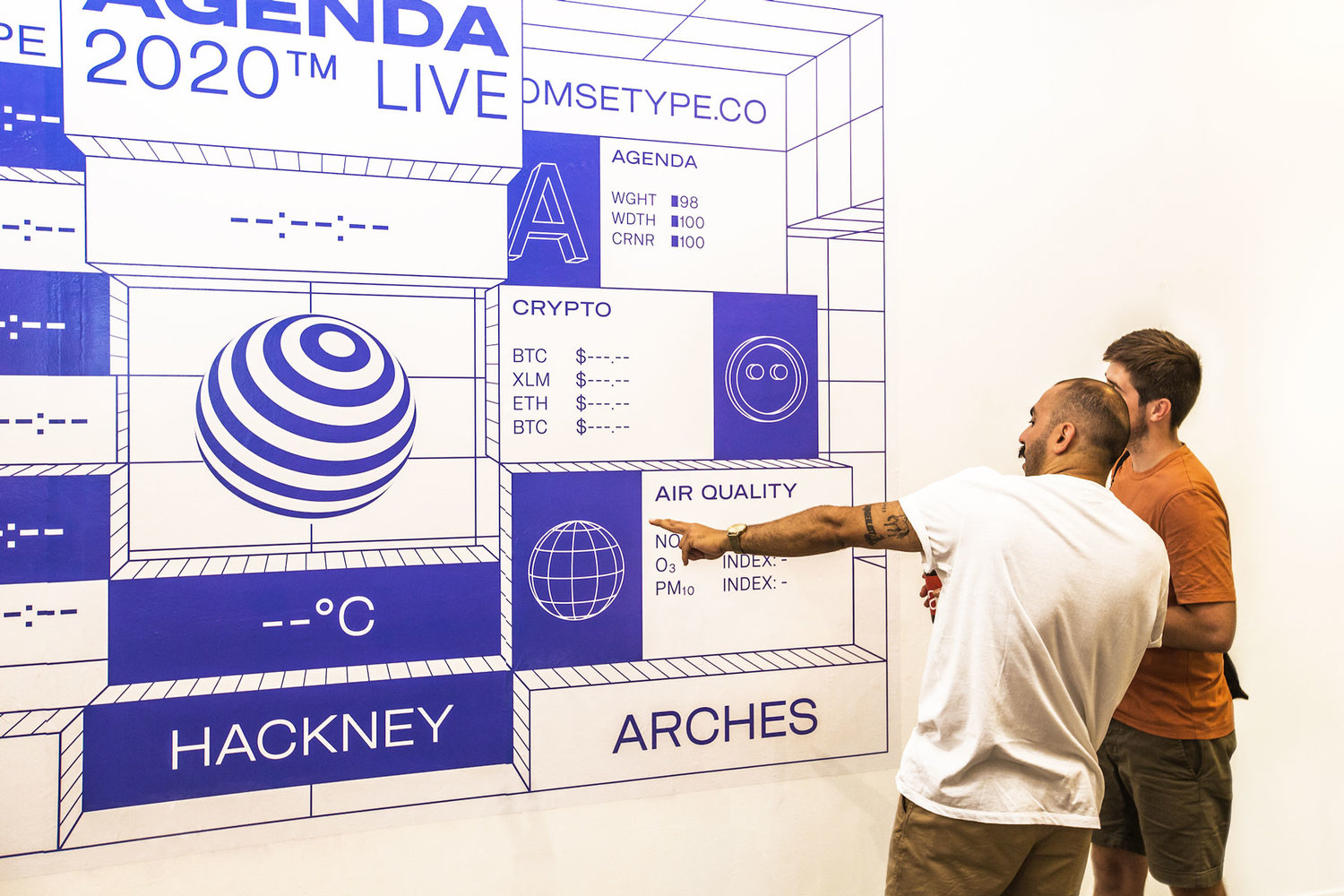

Agenda 2020 explores the graphical possibilities unlocked by emerging technologies such as variable fonts and augmented reality.

Using augmented reality as a medium, the project communicated ideas through spatial typography, live data, and sculptural installations. Each piece came to life through a custom-developed interactive AR application, specifically created for iOS and designed for the exhibition.

Make sure to download the app and visit the project page for a digital experience of the show.

Agenda 2020 was selected as Best in Book in The Annual 2019, Creative Review’s prestigious award scheme celebrating the best in creativity.

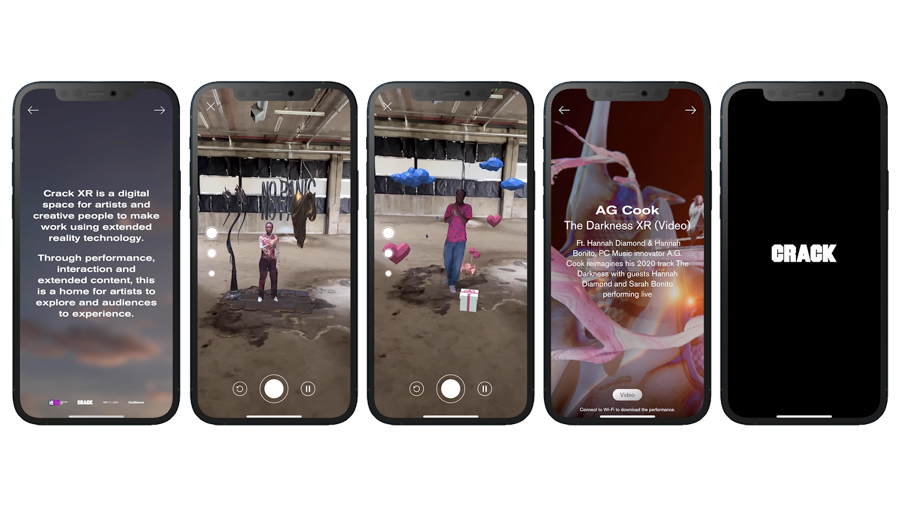

Crack XR

Crack XR is a digital space for artists and creative people to make work using extended reality.

Through performance, interaction, and extended content, this is a home for artists to explore and audiences to experience.

Crack XR is a digital space for artists and creatives to explore and create work using extended reality.

Through performance, interaction, and extended content, Crack XR serves as a home for artists to push boundaries and for audiences to experience groundbreaking work. Crack Magazine received funding from Innovate UK—a government-supported initiative aimed at driving innovation during the COVID-19 pandemic. The project’s goal was to develop an app for hosting experimental performances, powered by volumetric capture technology and driven by the visions of artists and creatives. Today, that app is available to download.

The app features performances such as Lagos-born, Bermondsey-raised rapper Flohio, who brings her kinetic and shapeshifting track Sweet Flaws to life in mesmerizing augmented reality. You can also experience the infectious sounds of rising London rapper Brian Nasty, whose lo-fi love song Heart Emoji comes alive in AR.

Additionally, A.G. Cook (PC Music innovator) reimagines his 2020 track The Darkness, with live performances by guests Hannah Diamond and Sarah Bonito in a hypnotic 3D-rendered video.

The performances on Crack XR were created in collaboration with Good Measure Studio, Ground Work, and Pretty Lucky, with art direction by Udoma Janssen. The 3D design for Flohio and A.G. Cook’s performances was created by London artist George Jasper Stone.

Tech: Unity, C#, Volumetric Filmmaking, 3D Scanning, Augmented Reality

Credits

- Technical Direction + Development: Oliver Ellmers

- Technical Lead: Good Measure Studio

- Project Co-ordination: Crack Magazine

- Production: Ground Work

- 3D Artist + Designer: George Jasper Stone

- Art Direction: Udoma Janssen

Diwali

Angus Muir Design was commissioned by Spur to design and build an interactive Canopy of Lights for Spark to celebrate Diwali with its staff, customers, and the public. The canopy featured 864 custom LED lanterns and a surround-sound audio system integrated into the lights, emphasizing Spark’s partnership with Spotify. Located at the main entrance of Spark’s head office, the installation invited the public to take control of the lights and music.

Development covered the interactive control interface, designed and built in the Lemur scripting environment, along with an audio-responsive pattern generator that determined the colors and brightness values displayed on the DMX matrix.

The interactive control system allowed users to bounce, pull, and move balls around a touch device's screen, which illuminated the lights in the DMX matrix. The touch interface was also mapped to a series of sounds, enabling the user to compose musical sequences while interacting with the light matrix.
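
The source does not say how ball positions were mapped onto the 864 lanterns. The sketch below is a minimal illustration of the idea under several assumptions: the lanterns form a hypothetical 36 × 24 grid, each lantern is a single RGB fixture, and brightness fades linearly with distance from the nearest ball. It moves the balls, derives a level for each lantern, and packs the result into DMX512 universes. Sending the frames out, for example over Art-Net or sACN, is not implemented. All names, the grid shape, the radius, and the warm RGB tint are hypothetical.

```python
import math
from dataclasses import dataclass

CHANNELS_PER_LANTERN = 3  # assumption: each lantern is one RGB fixture
UNIVERSE_SIZE = 512       # DMX512 carries 512 channel slots per universe
COLS, ROWS = 36, 24       # hypothetical grid layout: 36 x 24 = 864 lanterns


@dataclass
class Ball:
    """A ball on the touch screen, in unit-square coordinates (0..1)."""
    x: float
    y: float
    vx: float
    vy: float


def step(balls, dt):
    """Advance each ball and bounce it off the screen edges."""
    for b in balls:
        b.x += b.vx * dt
        b.y += b.vy * dt
        if not 0.0 <= b.x <= 1.0:
            b.vx = -b.vx
            b.x = min(1.0, max(0.0, b.x))
        if not 0.0 <= b.y <= 1.0:
            b.vy = -b.vy
            b.y = min(1.0, max(0.0, b.y))


def lantern_levels(balls, cols=COLS, rows=ROWS, radius=0.15):
    """Brightness 0..1 per lantern (row-major), fading with distance to the nearest ball."""
    levels = []
    for r in range(rows):
        for c in range(cols):
            # Centre of this lantern mapped into the same unit square as the screen.
            lx, ly = (c + 0.5) / cols, (r + 0.5) / rows
            d = min((math.hypot(b.x - lx, b.y - ly) for b in balls), default=math.inf)
            levels.append(max(0.0, 1.0 - d / radius))
    return levels


def pack_universes(levels, rgb=(255, 160, 40)):
    """Pack per-lantern RGB values into 512-slot universes, never splitting a fixture."""
    per_universe = UNIVERSE_SIZE // CHANNELS_PER_LANTERN  # 170 lanterns use 510 slots
    universes = []
    for start in range(0, len(levels), per_universe):
        frame = bytearray(UNIVERSE_SIZE)
        for i, level in enumerate(levels[start:start + per_universe]):
            for ch, value in enumerate(rgb):
                frame[i * CHANNELS_PER_LANTERN + ch] = round(value * level)
        universes.append(frame)
    return universes
```

With three channels per lantern, 864 lanterns need 2,592 channels. Packing 170 whole fixtures (510 slots) into each universe gives six universes. Keeping each fixture inside a single universe wastes two slots per universe, but it keeps the patch simple.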

Tech: TouchDesigner, Lemur

Credits

- Production: Angus Muir Design

- Development: Puck Murphy

Ukaipō

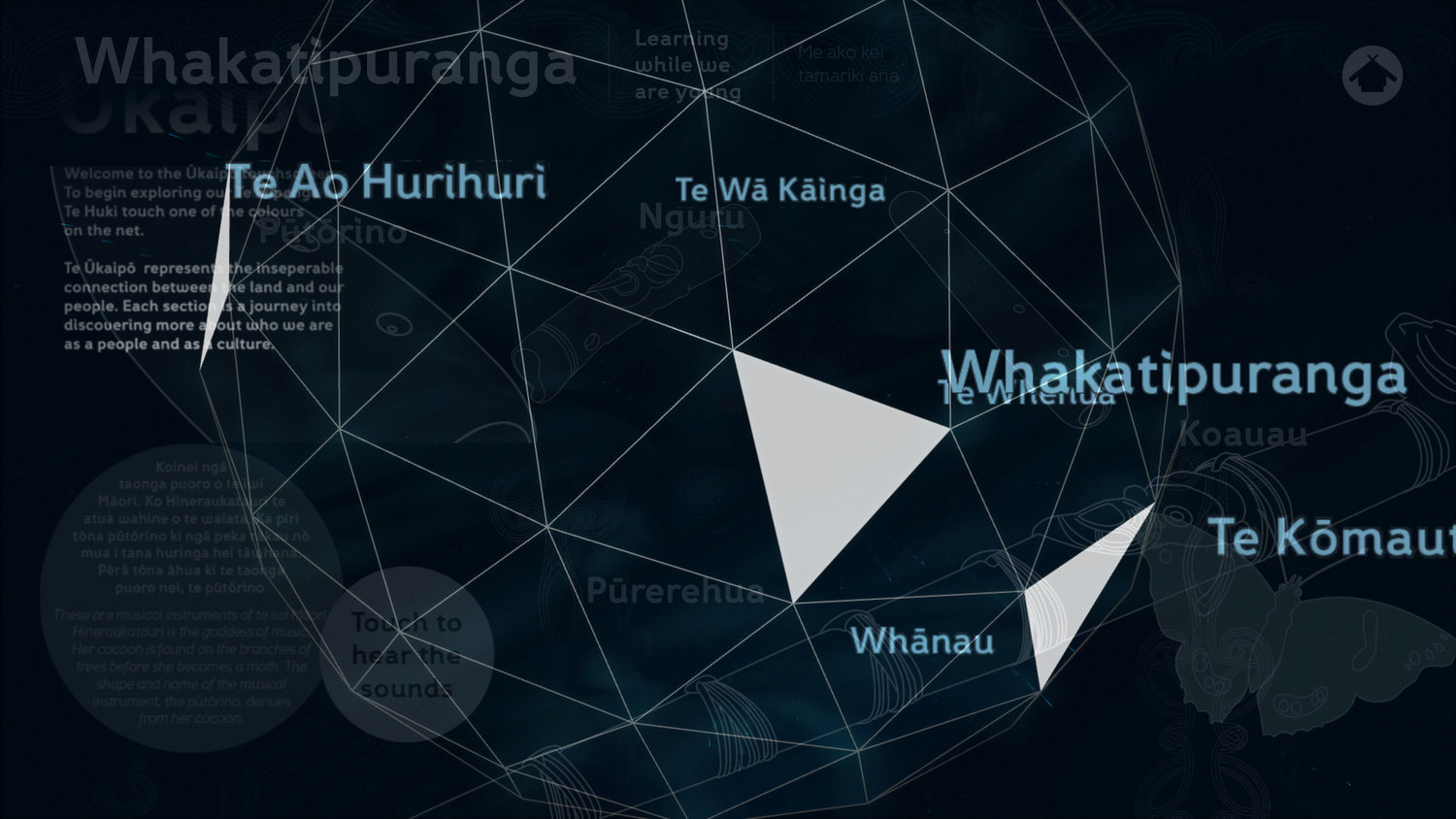

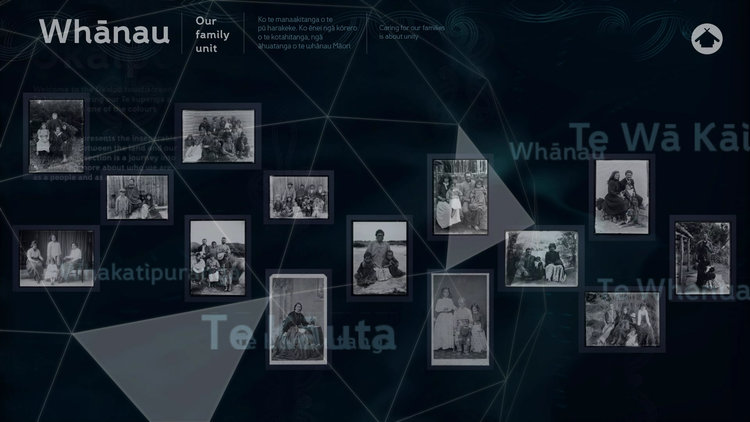

Ukaipō - O Tatou Whakapapa is an exhibition at the MTG Hawke’s Bay that celebrates traditional Māori culture and history, specifically in the Hawke’s Bay area of New Zealand. The term "Ukaipō" translates to "being fed at the breast at night," symbolizing identity, homeland, upbringing, and maternal connections. It reflects a deep cultural understanding of the bond between individuals and their heritage, representing a connection to one's roots and the nurturing of past generations.

Ukaipō - O Tatou Whakapapa is an exhibition at the MTG Hawke’s Bay that celebrates traditional Māori culture and history in New Zealand, specifically in the Hawke’s Bay area. The name "Ukaipō" refers to the concept of "being fed at the breast at night" and symbolizes identity, home, upbringing, and maternal connections. It is a deeply personal and cultural reflection of visiting one’s homeland, recalling childhood memories, and remembering past generations.

The design brief for the project was to create an interactive world based on cultural assets, allowing users to explore and discover pieces of information that expand upon their understanding of the exhibition space.

Drawing inspiration from the literal meaning of Ukaipō, the team constructed a visual narrative that encapsulated the mysterious and somewhat eerie feeling of being fed under the night sky. The journey begins on a screen showing a faceted net, bobbing in a stream beneath a dark sky.

Given the exhibition's focus on the Ruakituri River, the design was informed by the idea of ‘catching’ or ‘gathering’ cultural knowledge within a fishing net. The net itself served as both a navigation system and a map, guiding users through pockets of information held within its grasp.

Ukaipō was a finalist in The Best Design Awards 2014 by the Designers Institute of New Zealand.

For more information, visit the Best Awards – New Zealand and MTG Hawke’s Bay.

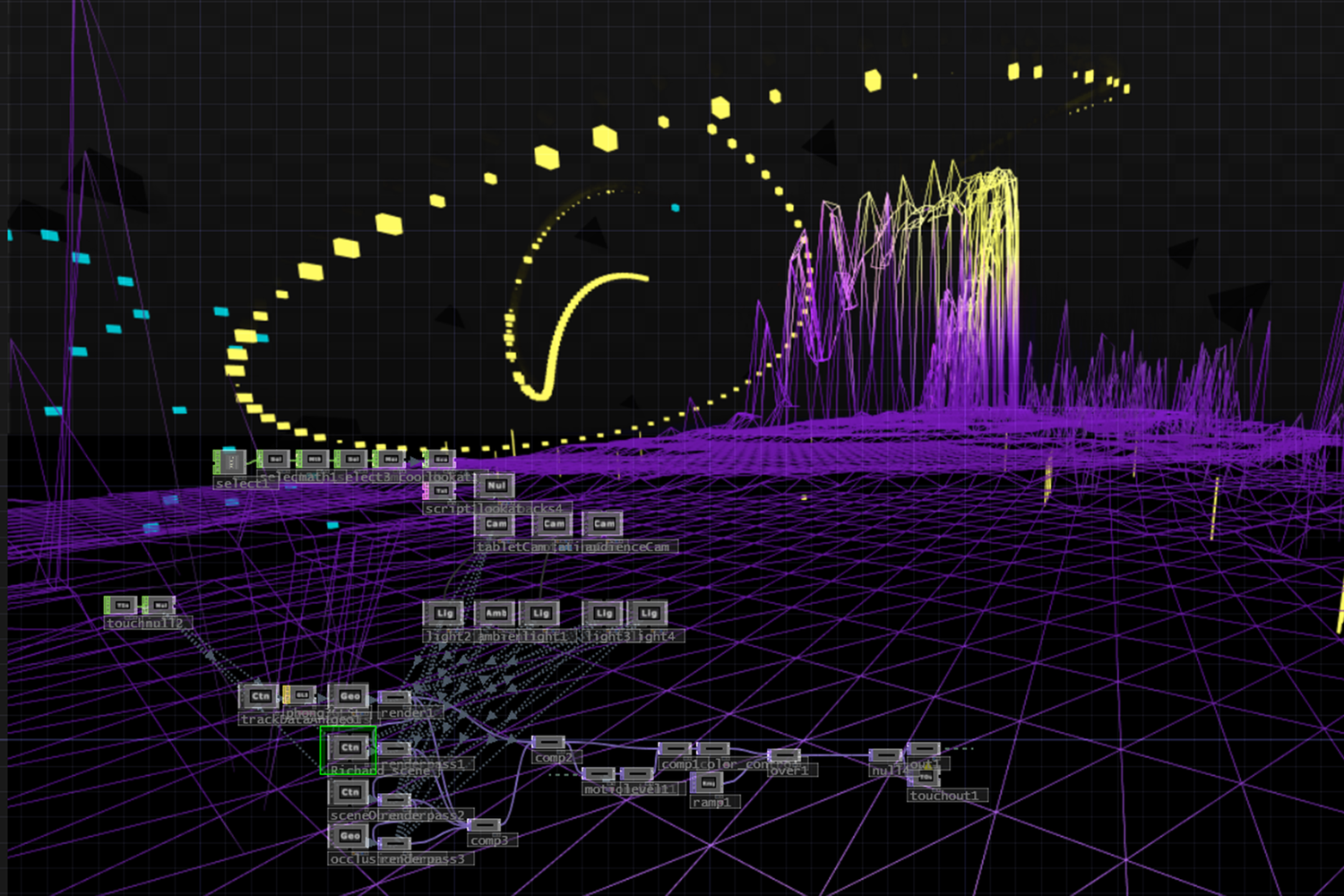

Choreographic Coding Lab

The Choreographic Coding Lab [CCL] format offers unique opportunities for exchange and collaboration for digital media ‘code savvy’ artists who have an interest in translating aspects of choreography and dance into digital form and applying choreographic thinking to their own practice.

The Choreographic Coding Lab (CCL) format offers a unique opportunity for exchange and collaboration among digital media artists skilled in coding who are interested in translating aspects of choreography and dance into digital forms. It also encourages the application of choreographic thinking to their own practice. More information on CCL can be found here.

In 2015, Oliver participated as part of a team alongside Richard De Souza, Peter Walker, Steven Kallili, and Michael Havir in the CCL X Motion Lab at Deakin University’s Burwood Campus in Melbourne. Together, they developed a prototype for a real-time augmented motion capture visualization system. This system streamed motion capture data to visualize movement in 3D, augmenting the performer and allowing users to interact with the visualizations. The interaction enabled the user to experience the digital performance from various perspectives, offering control over certain aspects of the visualizations.

The system kept perspective and scale aligned by tracking the position of the device running the app (in this case, a tablet) and applying that data to a 3D virtual camera viewing the motion capture visualizations.
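
The sketch below is a minimal illustration of that alignment. It assumes the tablet's tracked pose (position plus an orientation, here simplified to yaw and pitch) arrives in the same metric coordinate frame as the motion capture data. A virtual camera is placed at that pose and each joint is projected with a pinhole model. Because the camera and the performer share one frame, scale comes out correct without any extra calibration factor. The helper names, the yaw/pitch parameterisation, the 60° vertical field of view, the 4:3 aspect ratio, and the OpenGL-style -Z viewing direction are all assumptions. The original system was built in TouchDesigner, not standalone Python.

```python
import math


def yaw_pitch_to_matrix(yaw, pitch):
    """World-from-camera rotation: yaw about +Y, then pitch about +X (radians)."""
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    # Product Ry(yaw) @ Rx(pitch), written out row by row.
    return [
        [cy, sy * sp, sy * cp],
        [0.0, cp, -sp],
        [-sy, cy * sp, cy * cp],
    ]


def world_to_camera(point, cam_pos, cam_rot):
    """Express a world-space mocap point in the tablet camera's frame: R^T (p - t)."""
    d = [point[i] - cam_pos[i] for i in range(3)]
    # Multiplying by R transpose means dotting d with each column of R.
    return [sum(cam_rot[i][j] * d[i] for i in range(3)) for j in range(3)]


def project(point, cam_pos, cam_rot, fov_y_deg=60.0, aspect=4 / 3):
    """Pinhole projection to normalised device coordinates.

    The camera looks down its local -Z axis (OpenGL convention); points
    inside the view frustum land in [-1, 1], points behind it return None.
    """
    x, y, z = world_to_camera(point, cam_pos, cam_rot)
    if z >= 0.0:
        return None
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    return (f / aspect * x / -z, f * y / -z)


if __name__ == "__main__":
    # Hypothetical frame: tablet held 3 m from the performer at chest height.
    tablet_pos = (0.0, 1.5, 3.0)
    tablet_rot = yaw_pitch_to_matrix(0.0, 0.0)
    hand = (0.4, 1.6, 0.0)  # one mocap joint, in metres
    print(project(hand, tablet_pos, tablet_rot))
```

In a live system the tablet pose would update every frame, so the projection keeps the overlay locked to the performer as the viewer walks around them.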

The entire application was designed and developed in TouchDesigner, with each team member contributing their specialized skills to the framework: Peter focused on data interpretation, Richard developed the interactive interface for the tablet, Oliver handled integration and visualization, Michael worked on sound development, and Steven was responsible for performance.

At the end of the lab, the team had a functioning prototype that blended digital and physical interactive performance, as well as a broader understanding of the high-end motion capture industry and how to work with its data.

Tech: TouchDesigner, Motion Capture

Credits

- TouchDesigner Developer: Richard De Souza

- TouchDesigner Developer: Peter Walker

- Performance Artist: Steven Kallili

- Sound Designer: Michael Havir

Yours To Make: Fluid Imaginarium

As part of Instagram's global Yours To Make platform, Instagram hosted an exhibition at Saatchi Gallery London in collaboration with studio HERVISIONS from November 4th to 9th, 2021. The motion artwork, created using gaming technology, celebrated British youth culture in 2021, inspired by and made from Instagram Reels posted by 50 exciting, emerging British Gen Z creators.

Yours to Make: Fluid Imaginarium took visitors on a journey through multiple dimensions, guided by digitally created hybrid characters that represented different facets of Gen Z self-expression on Instagram, such as community connection, experimentation, and exploration.

Tech: Unity 3D

Credits

- HERVISIONS: Zaiba Jabbar

- Technical Director | Unity Developer | Technical Artist: Oliver Ellmers

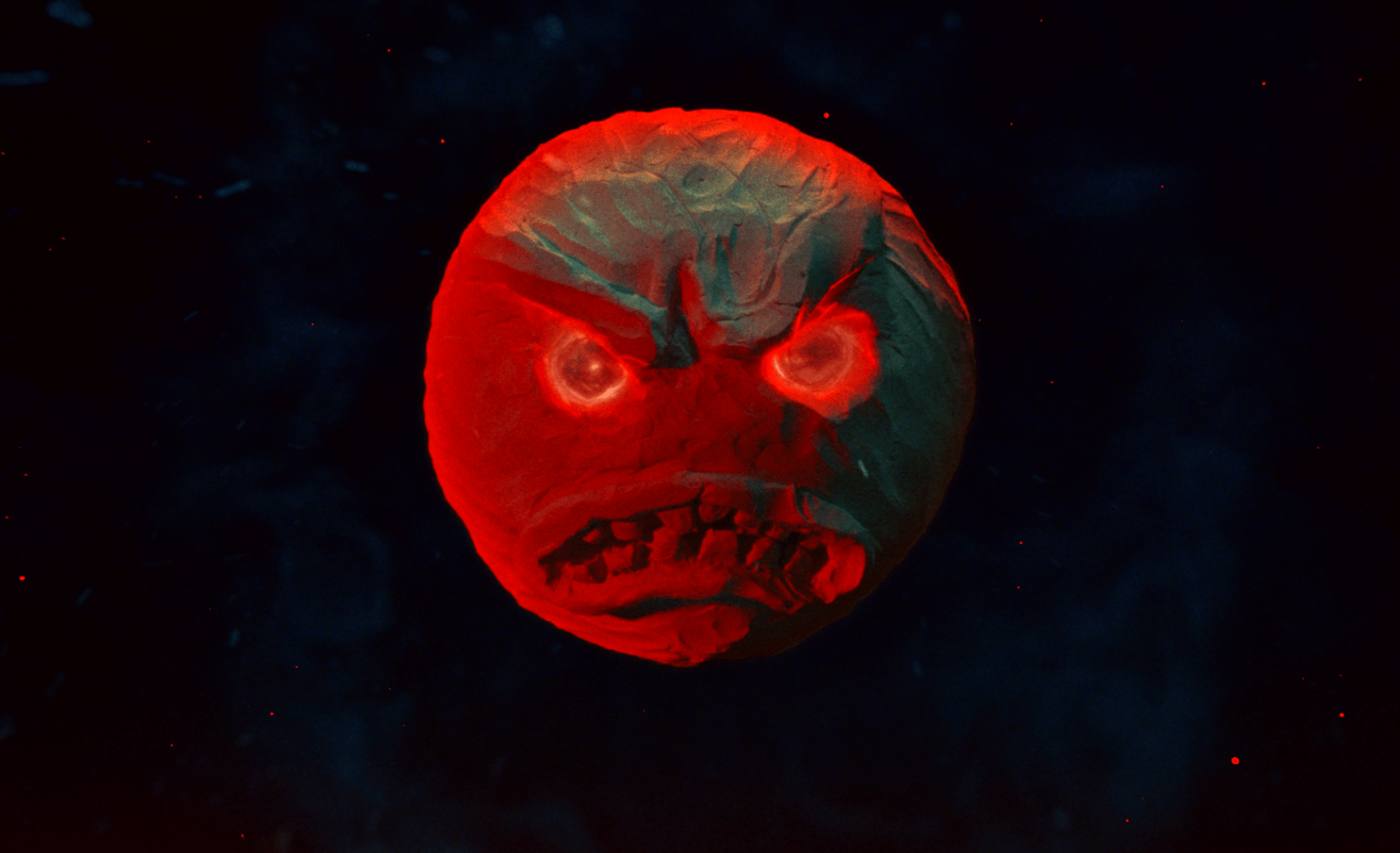

MOON

'MOON', starring Brian Nasty and featuring his music.

Featured on NOWNESS Picks!

Tech: Notch, Unity, Adobe CC

Credits

- VFX & Post Production: Oliver Ellmers

- Writer & Director: Luke Casey

- Executive Producer: Aaron Willson

- DOP: Harry Wheeler

- Production Designer: Danny Hyland

- Production Assistant: Anna Butler

- Stylist: Taff Williamson

- HMU: Chloe Amber Rose

- Sound Design: Oliver Mapp

- Grade: Tim Smith

- Contributing Writer: Huei Lin
